Have a Personal AI Supercomputer at your desk. Plug-and-play AI workstations powered by the latest NVIDIA RTX 5090, RTX PRO 6000 Blackwell, H200, and B200 GPUs, pre-installed with AI frameworks and LLM tools, and equipped with custom water cooling. Optimized for local LLM inference, AI training, fine-tuning, and deep learning research.

When you buy a BIZON workstation, you become part of an ecosystem built for AI engineers and researchers. BIZON systems come with Ubuntu 24.04 LTS pre-installed, along with a full AI software stack (PyTorch, TensorFlow, CUDA, cuDNN, Docker, vLLM, Ollama, and Hugging Face Transformers) and NVIDIA drivers optimized and tested on BIZON hardware. In addition, BizonOS includes BIZON Apps: built-in tools for GPU benchmarks, monitoring, and optimization, plus the Z-Stack framework manager for one-click library updates.

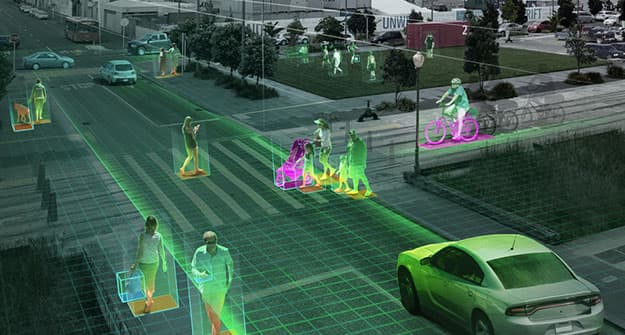

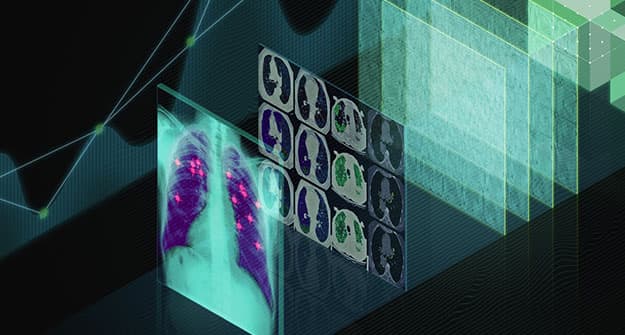

More than 500 top universities and companies across a wide range of industries trust BIZON's AI and deep learning GPU solutions. Our team of AI engineers is trained to provide the best possible purchasing experience for our customers.

Plug and Play

Plug-and-play setup that takes you from power-on to AI training in minutes. BIZON systems ship fully configured and turnkey — pre-installed OS, drivers, frameworks, and Docker containers ready to go.

Technical Support from AI Engineers

Each BIZON workstation is backed by lifetime expert care and a warranty of up to 5 years. Our technical support team is highly knowledgeable in AI/ML frameworks, CUDA, Docker, SLURM, and Linux/Ubuntu administration — not just basic hardware support.

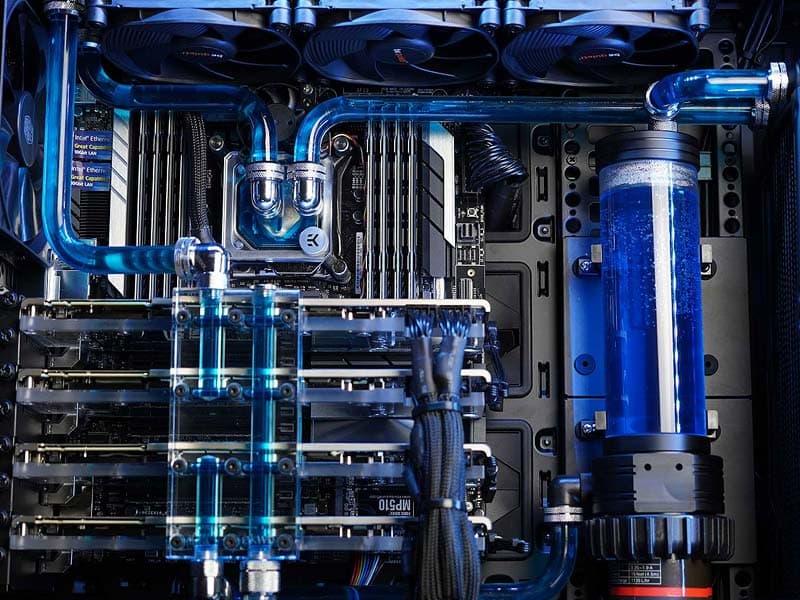

Water-Cooled and Powered by the Latest Hardware

Up to 3x lower noise than air-cooled systems. Our custom water-cooling technology keeps multi-GPU systems running at peak performance 24/7. Powered by the latest NVIDIA RTX 5090, RTX PRO 6000 Blackwell, H200, and B200 GPUs, along with AMD EPYC or Intel Xeon processors.

Wide Range of Configurations

From 2-GPU desktop workstations to 8-GPU rackmount servers, we offer fully customizable systems starting at $2,490. BIZON systems are purpose-built for AI training, LLM inference, fine-tuning, and deep learning research.

BizonOS is based on Ubuntu 24.04 LTS and comes pre-installed with GPU-accelerated AI frameworks, NVIDIA drivers, the CUDA toolkit, and tools optimized and tested on BIZON workstations and servers. BizonOS includes BIZON Apps, built-in tools for GPU benchmarks, monitoring, optimization, and overclocking. BIZON Apps save you hours on routine operations that would otherwise require command-line expertise. BIZON customers get access to a knowledge base, video guides, and technical support from AI engineers. We also make the move from Windows to Ubuntu as painless as possible.

BIZON workstations come pre-installed with the most popular AI and deep learning frameworks and tools: PyTorch, TensorFlow, CUDA, cuDNN, Docker, Hugging Face Transformers, vLLM, Ollama, RAPIDS, and Anaconda. Our BIZON Z-Stack Tool provides a user-friendly interface for installing and upgrading frameworks. When a new version of any framework is released, you can upgrade with a single click, with no command line required.

Hardware is nothing without software, especially for AI workloads. Every BIZON system goes through extensive hardware and software compatibility testing. All components (GPUs, CPUs, memory, storage, and cooling) are tested and tuned to work together out of the box, with no additional setup.

Customers get access to video guides and a knowledge base covering setup, AI training workflows, Docker, Jupyter notebooks, SLURM cluster management, and more. Whether you're new to GPU computing or an experienced researcher, these resources help you get productive faster.

AI developers deal with repetitive operations daily — library installations, driver updates, GPU monitoring, environment configuration. Most of these require command-line expertise and Linux administration experience. BIZON Apps pack the most common operations into one-click tools: GPU and CPU benchmarks, real-time GPU monitoring, Jupyter notebook launcher, Docker container management, and more.

Today's AI and LLM workloads demand scalable infrastructure. You can start with a single BIZON workstation and scale to a multi-node GPU cluster. Our BIZON Z-Stack software makes deployment seamless — replicate your OS and framework configuration across multiple systems with one click. BIZON engineers are experienced with SLURM workload manager, Kubernetes, and cluster orchestration for distributed AI training.