The Operating System for AI, Deep Learning & Local LLM Inference

When you buy a BIZON workstation, you become part of an ecosystem built for AI engineers and researchers.

BIZON systems come pre-installed with BizonOS (based on Ubuntu 24.04 LTS) and a full AI software stack, including PyTorch, TensorFlow, CUDA, cuDNN, Docker, vLLM, Ollama, Hugging Face Transformers, and NVIDIA drivers optimized and tested on BIZON hardware.

BizonOS also includes BIZON Apps: built-in tools for GPU benchmarking, monitoring, and optimization, plus the Z-Stack framework manager for one-click library updates.

Everything You Need to Start Developing

BizonOS comes pre-installed with GPU-accelerated frameworks (vLLM for local LLM serving, PyTorch, TensorFlow, CUDA, Docker) plus NVIDIA drivers tuned for BIZON workstations and servers. Start training models and running local LLMs out of the box.

Deep Learning Frameworks

NVIDIA GPU Stack

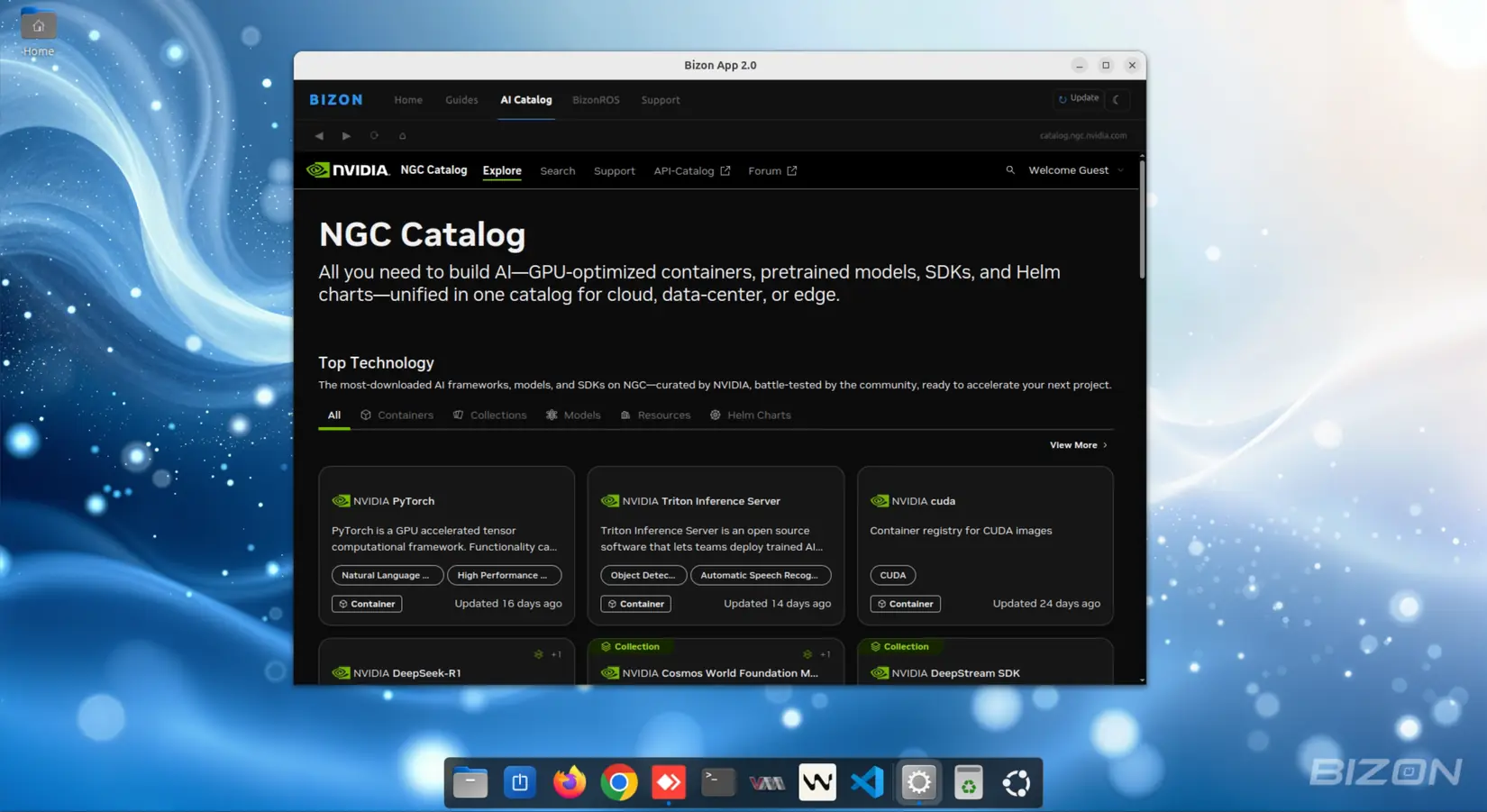

Docker & NGC Containers

Local LLM Inference: vLLM & Ollama

Remote Control Apps

API & MCP Server for AI Agents

Purpose-Built Apps for AI Developers

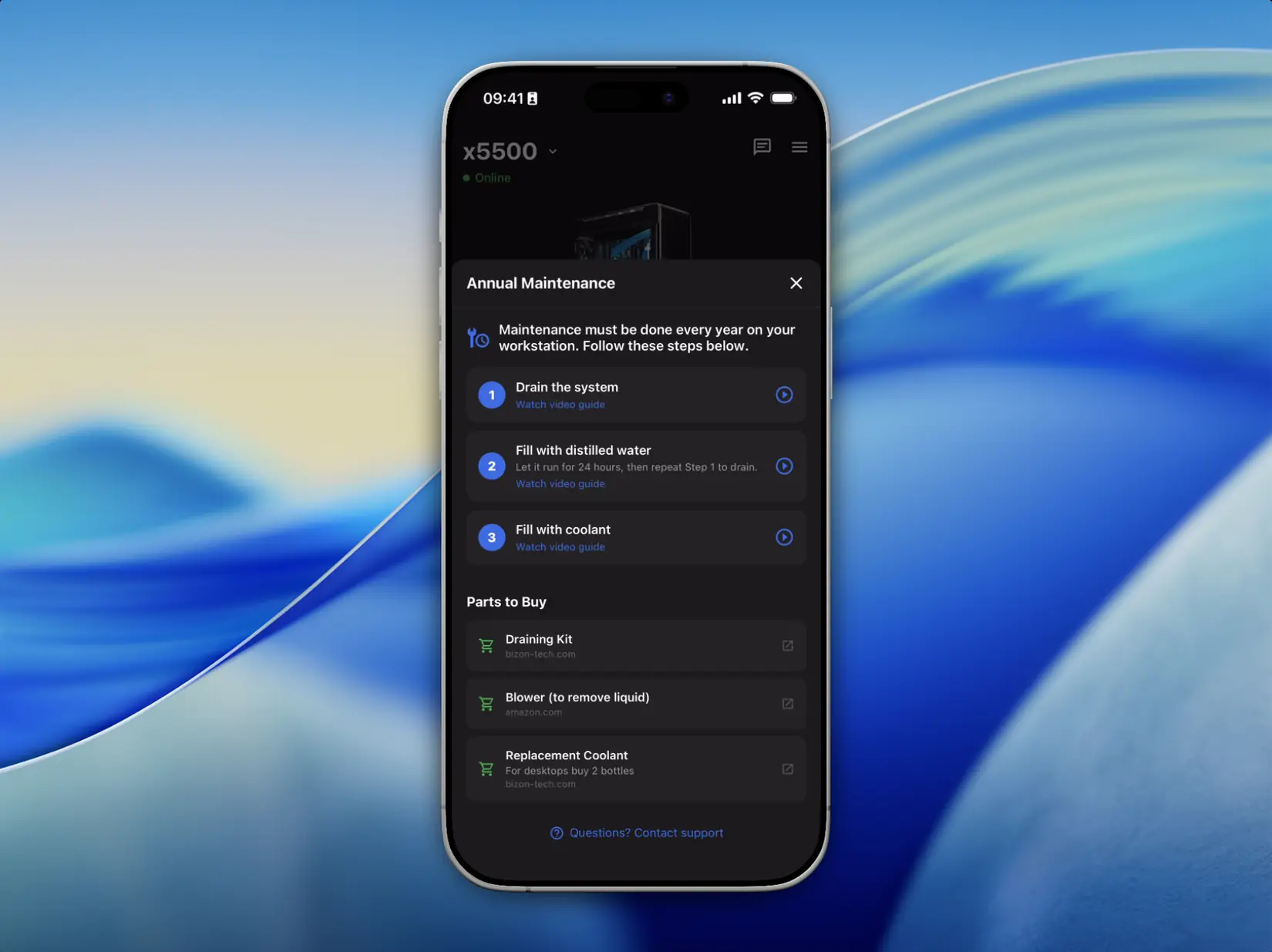

Control, monitor, and diagnose your workstation from a web browser or your iPhone, securely within your local network. Every BIZON system includes these apps.

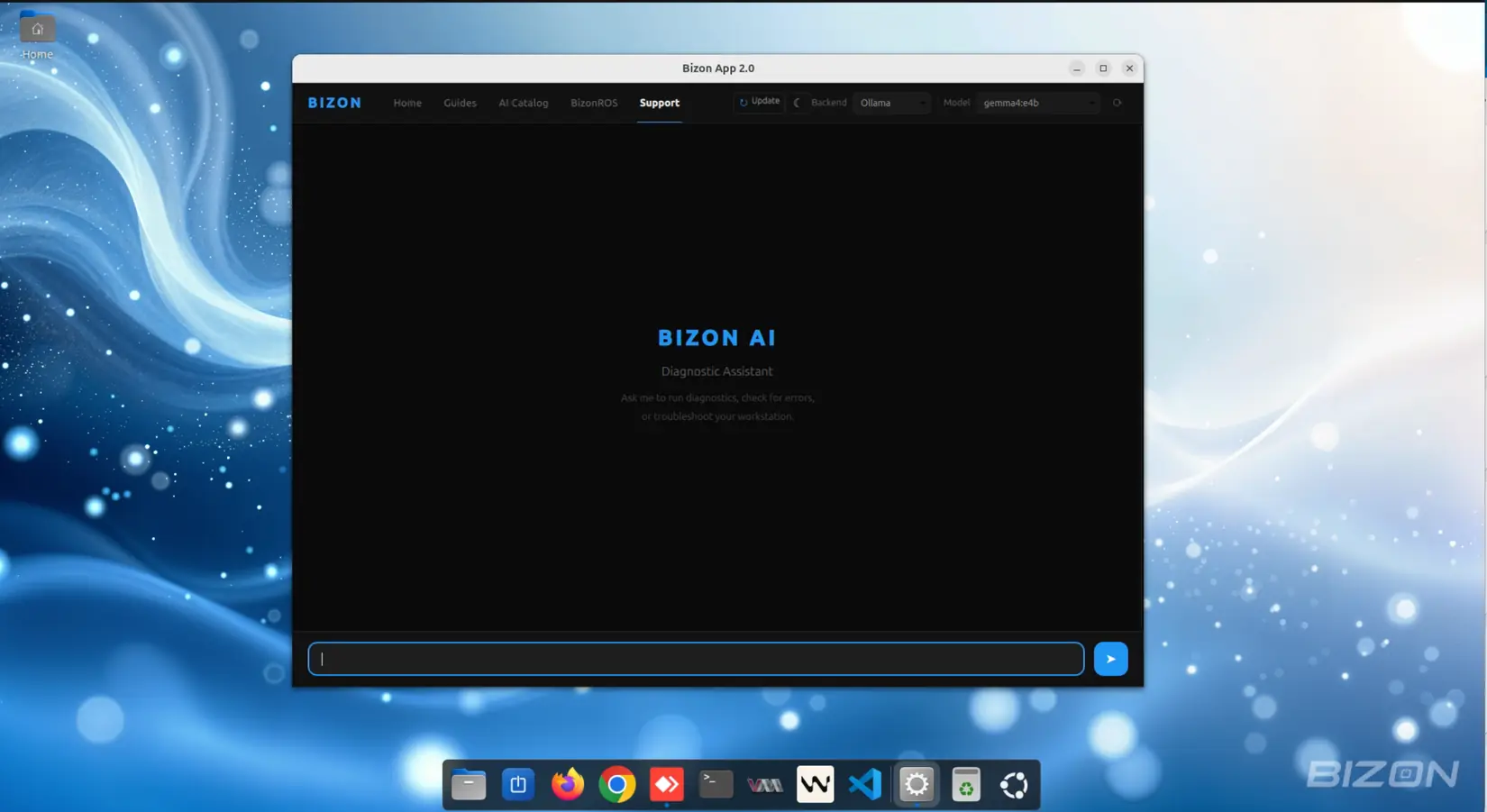

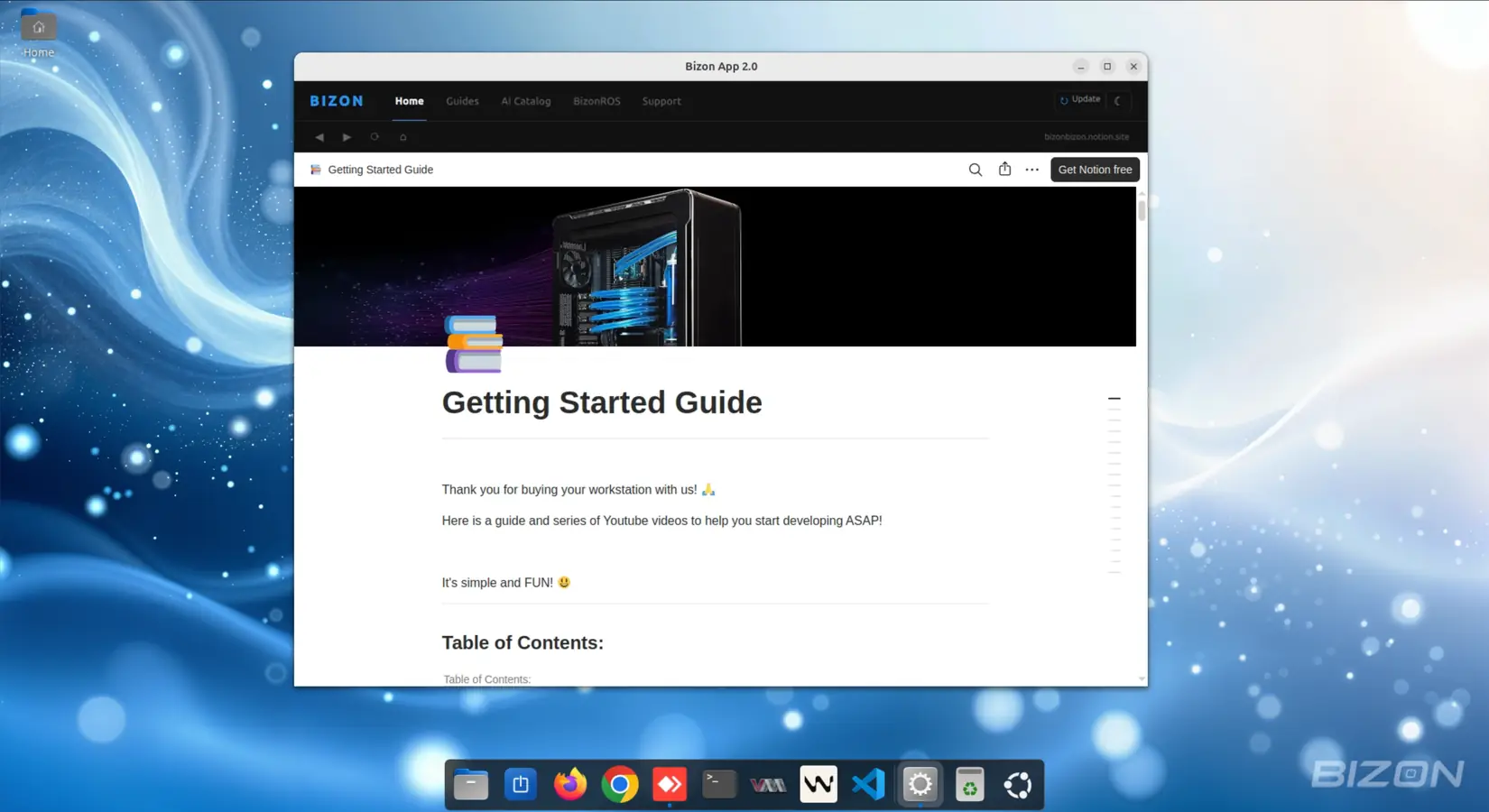

Bizon Desktop App

A native Linux desktop application pre-installed on every BIZON workstation, serving as your AI-powered welcome hub. Get diagnostics, guides, and support without touching a terminal.

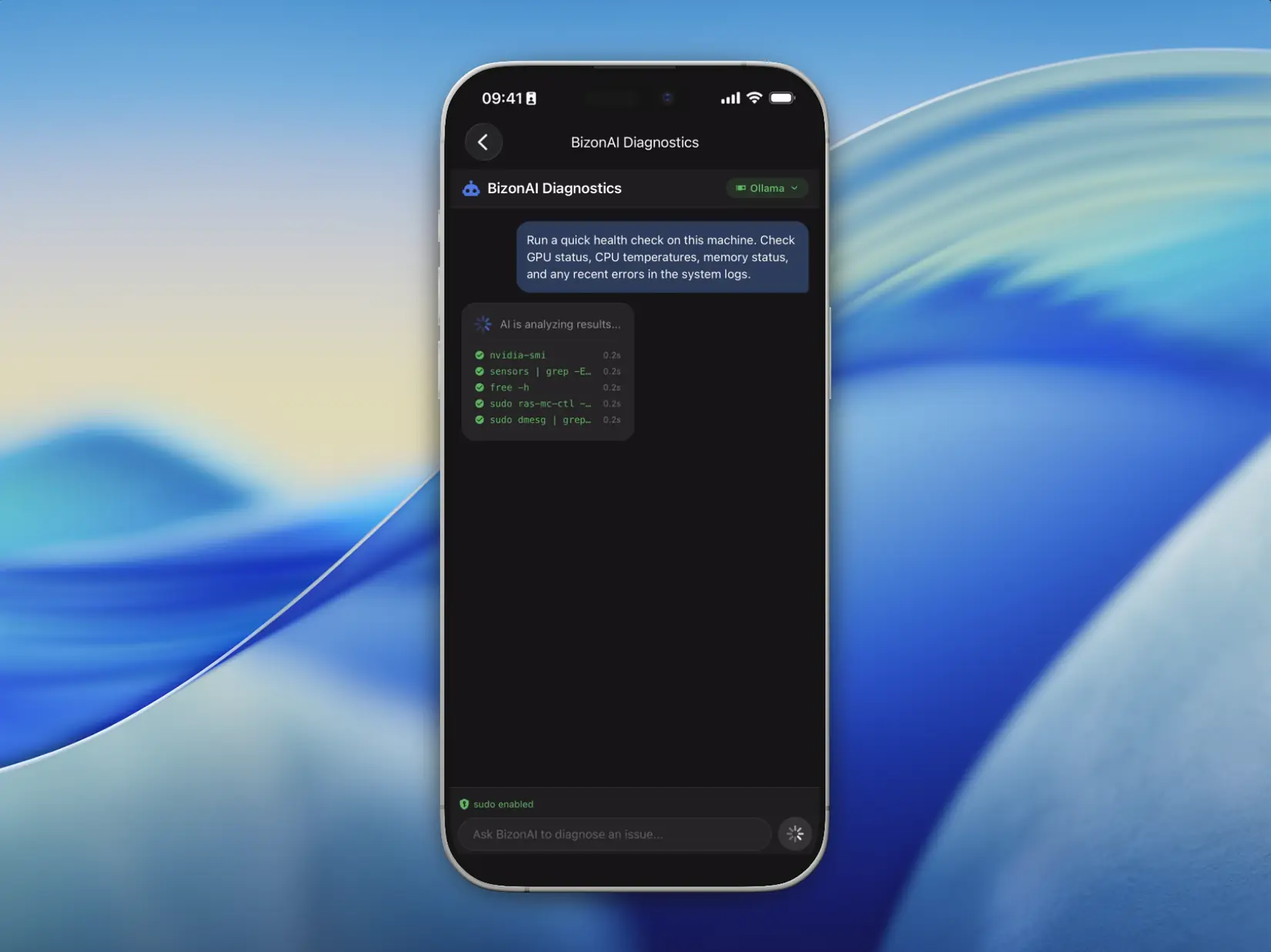

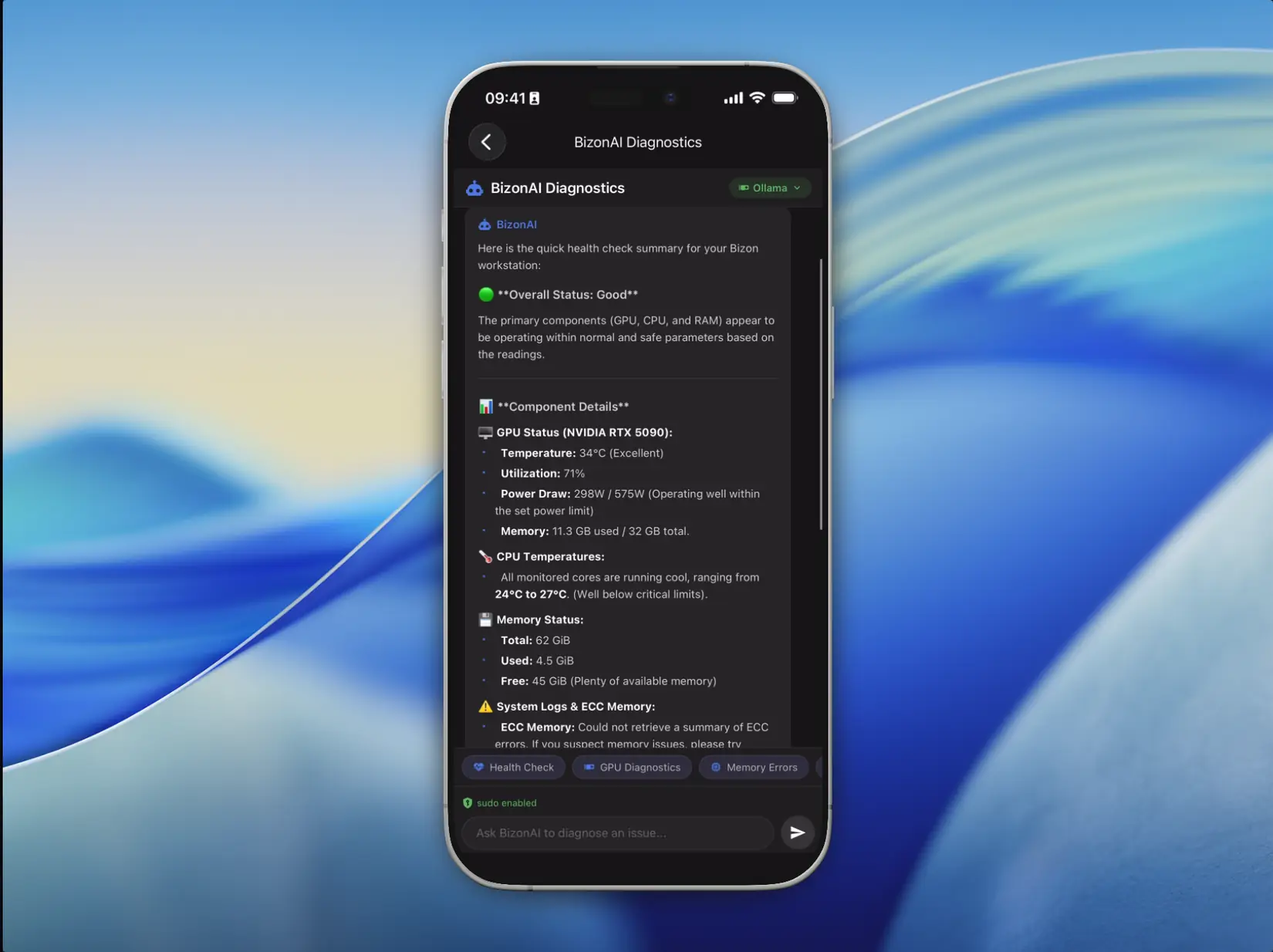

- ✓ AI Diagnostic: conversational assistant with Markdown rendering

- ✓ Local AI models run fully on-device, so your data never leaves the workstation

- ✓ Optional Claude backend for cloud-assisted AI support

- ✓ AI tool execution: runs diagnostic commands and reports results

- ✓ Embedded browser: NVIDIA AI Catalog, Notion guides, support resources

- ✓ Dark & Light mode: all components adapt dynamically

- ✓ One-click app updates directly from the interface

- ✓ Customizable system prompt for your AI workflow

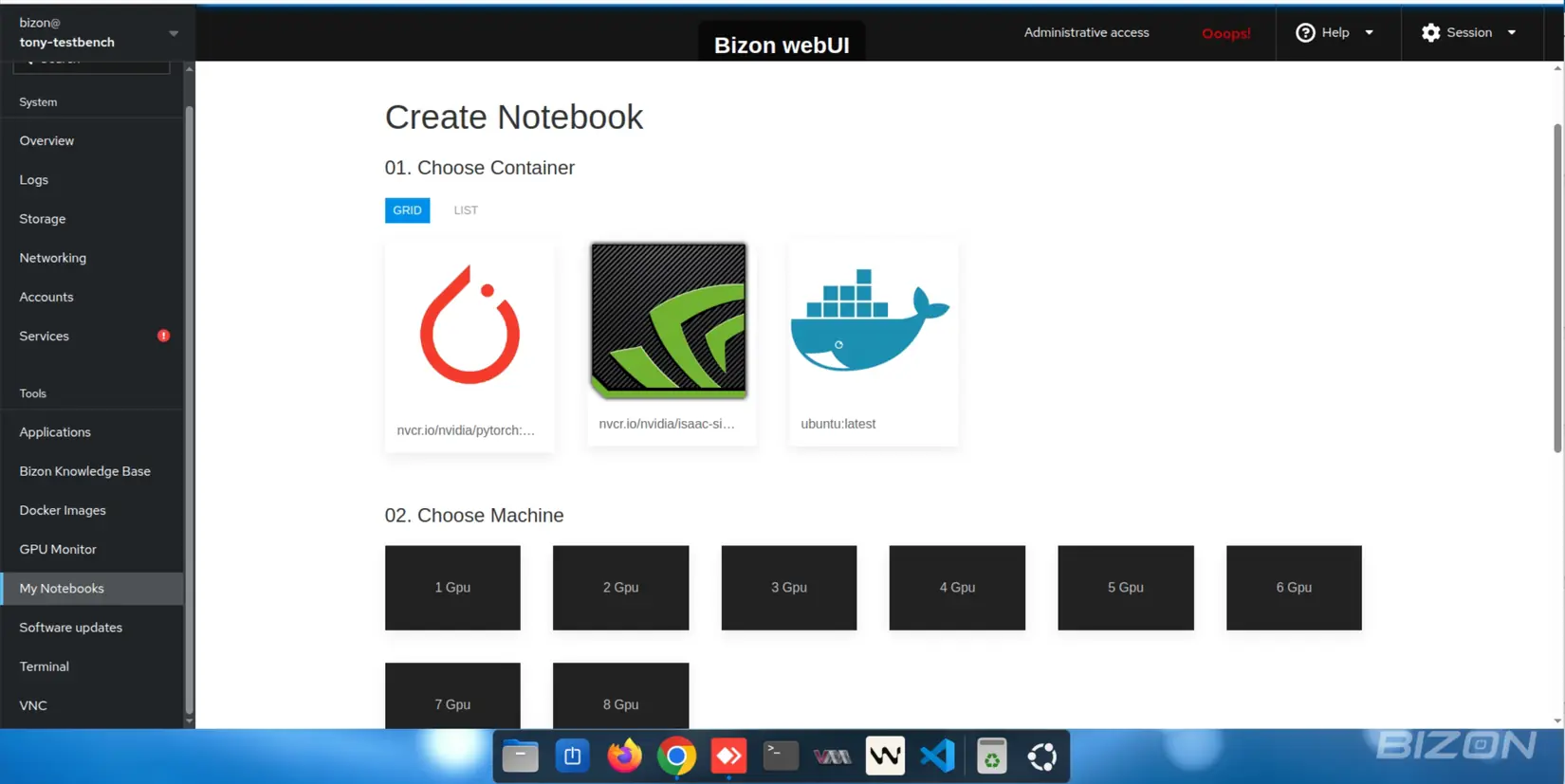

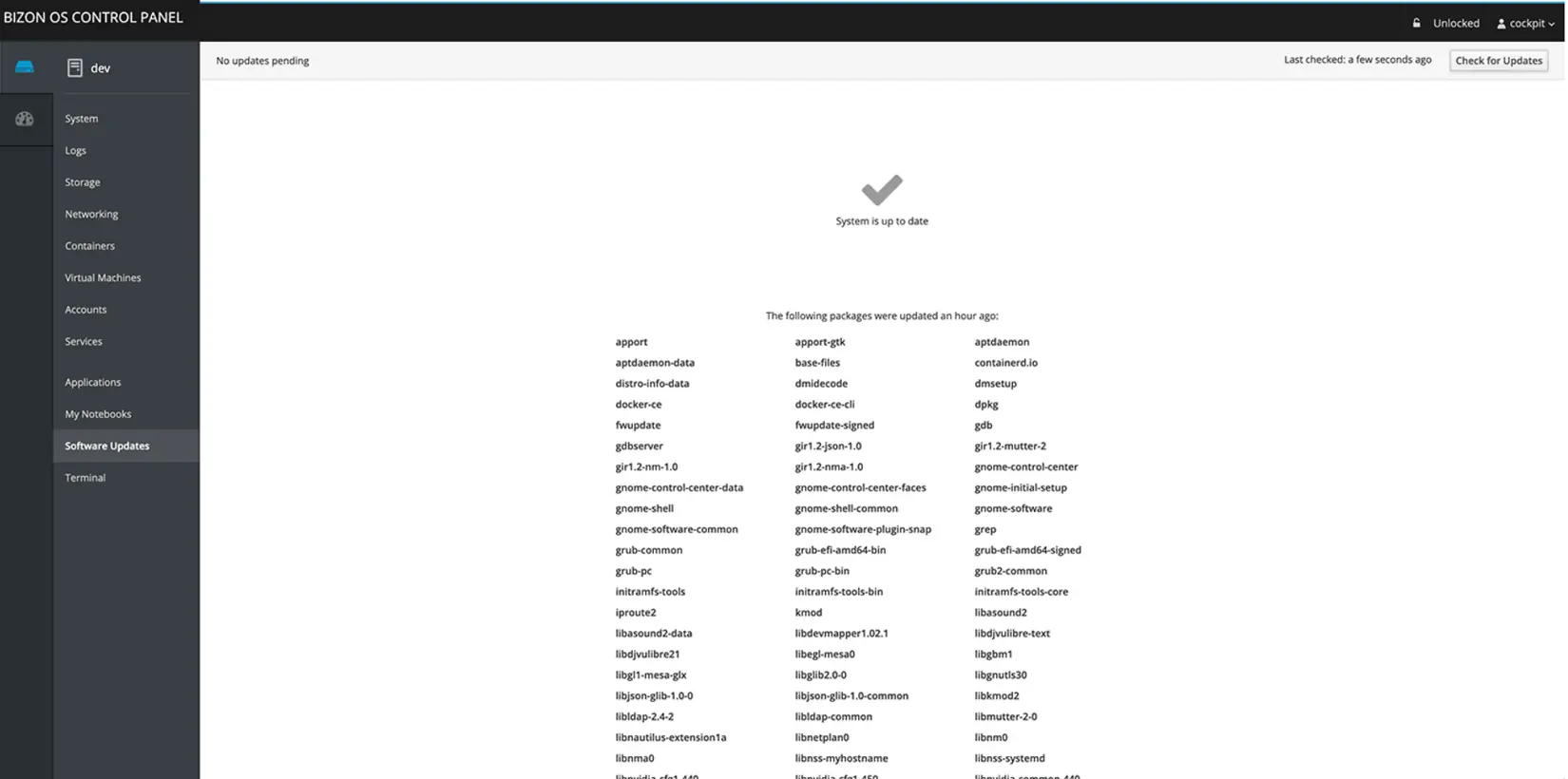

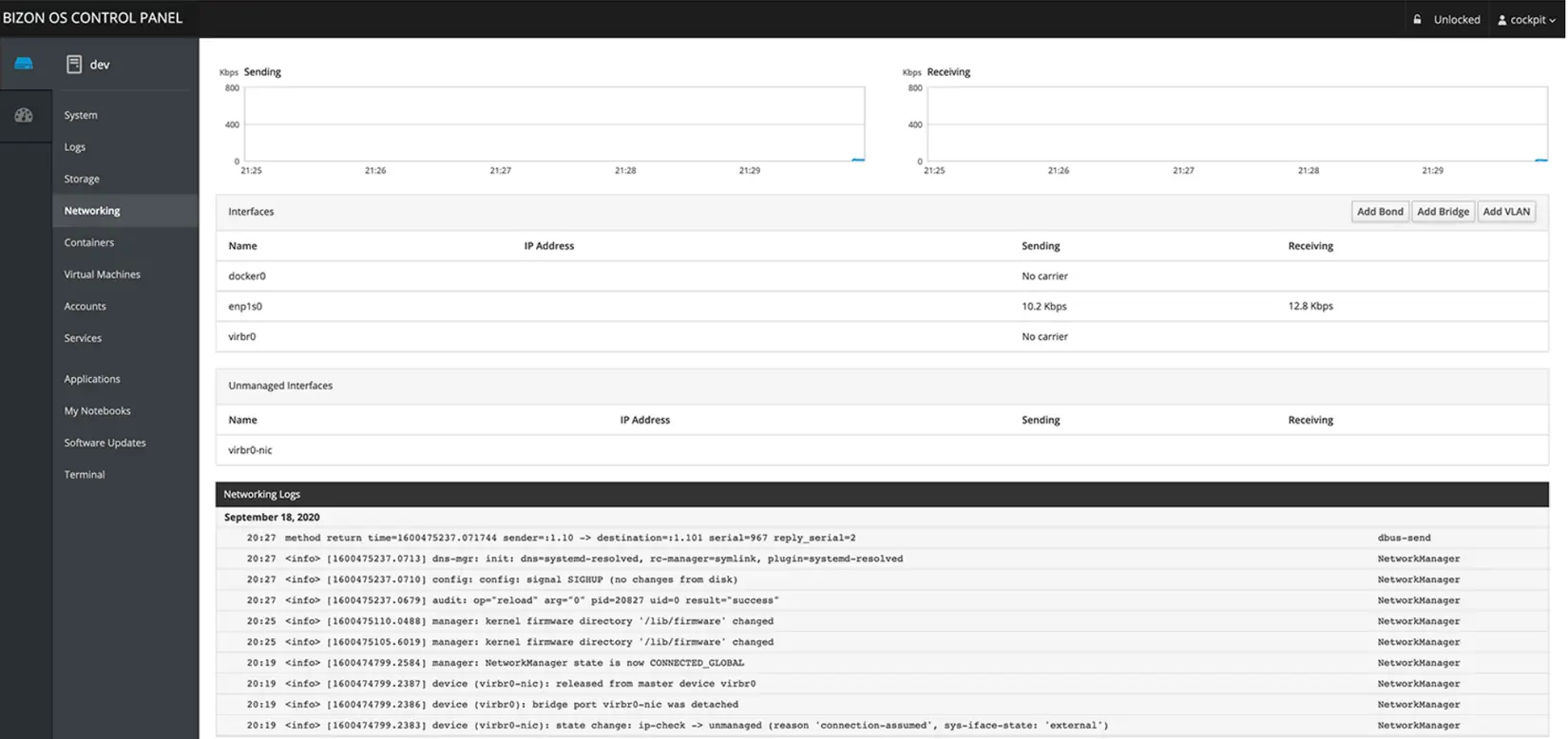

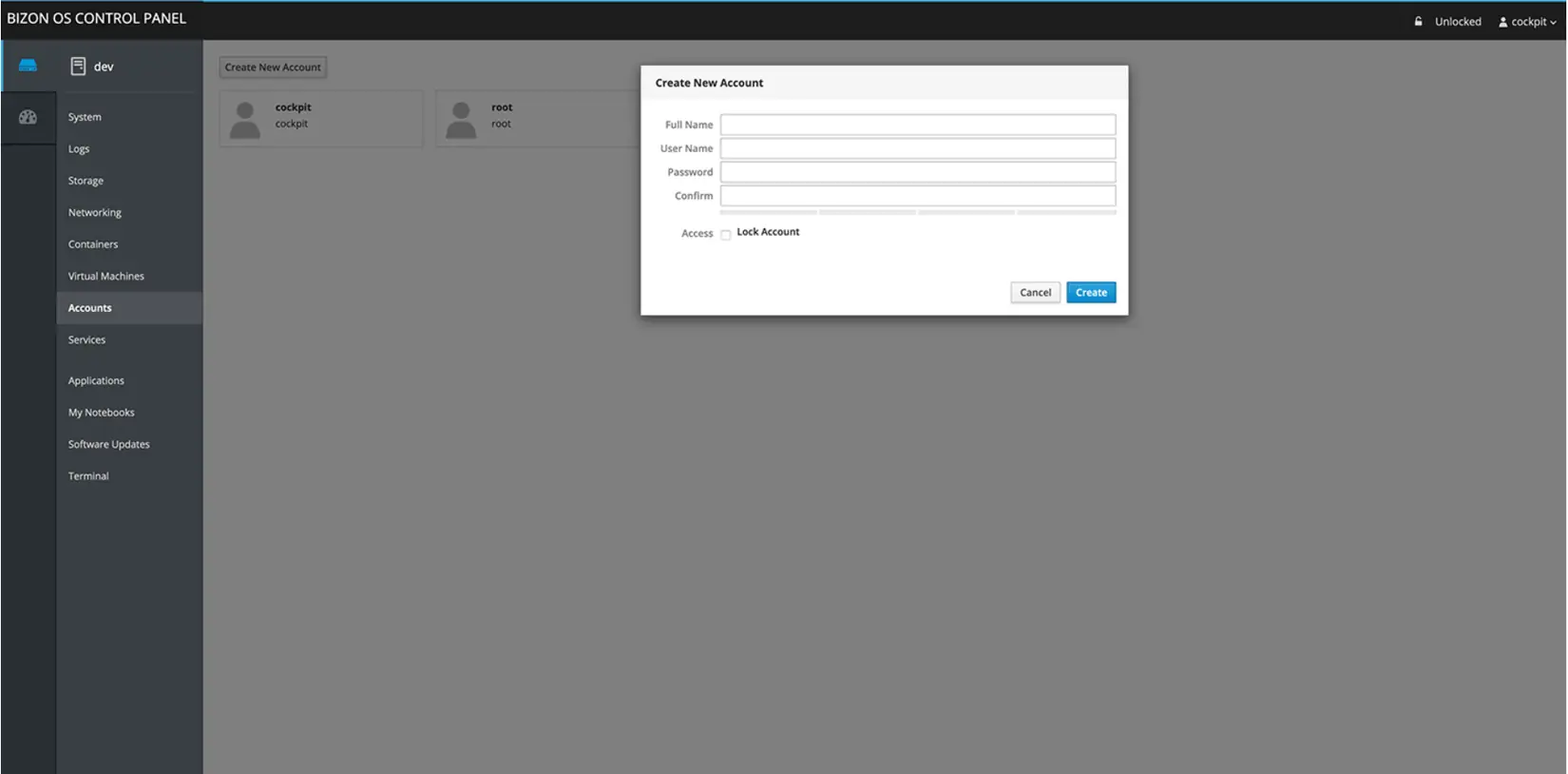

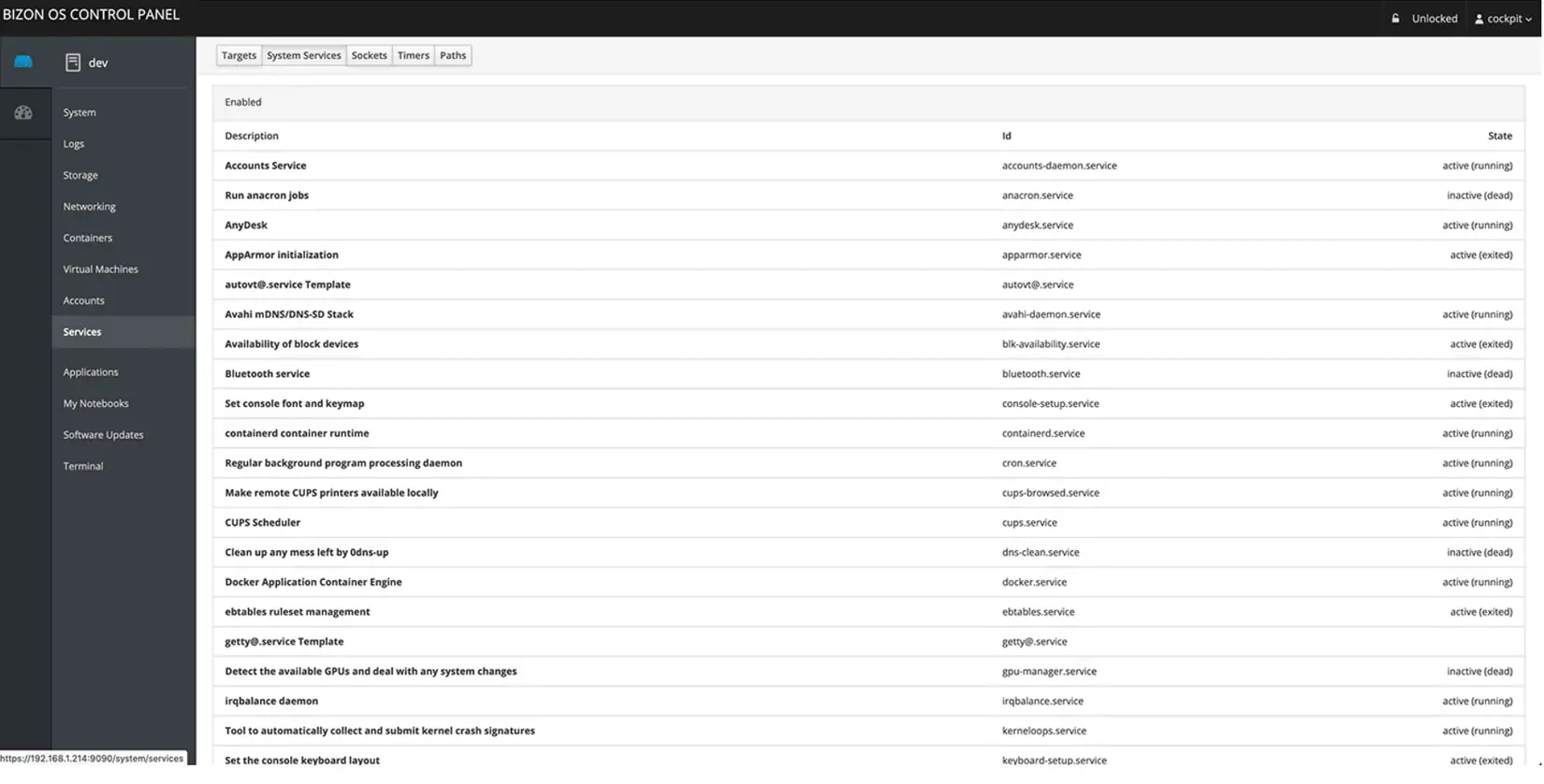

Bizon Control Panel

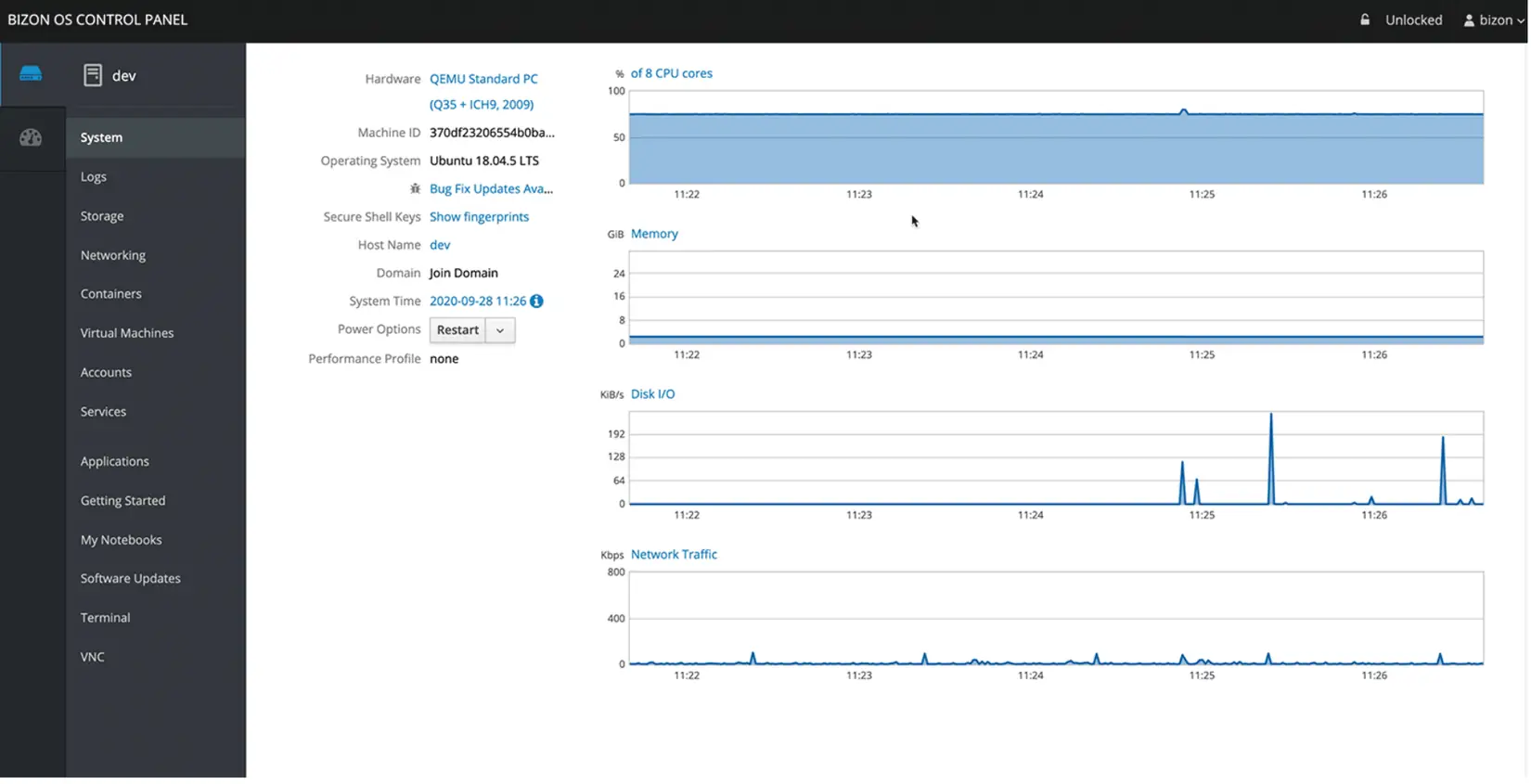

A browser-based control panel running locally on your workstation. Manage every aspect of your system from an intuitive interface. No command-line expertise required.

- ✓ Dashboard: Add systems, create clusters, control everything from one place

- ✓ Software Updates: One-click framework and driver updates

- ✓ Terminal: Built-in web terminal with full SSH access

- ✓ Containers: Pull, launch, and manage Docker containers without the command line

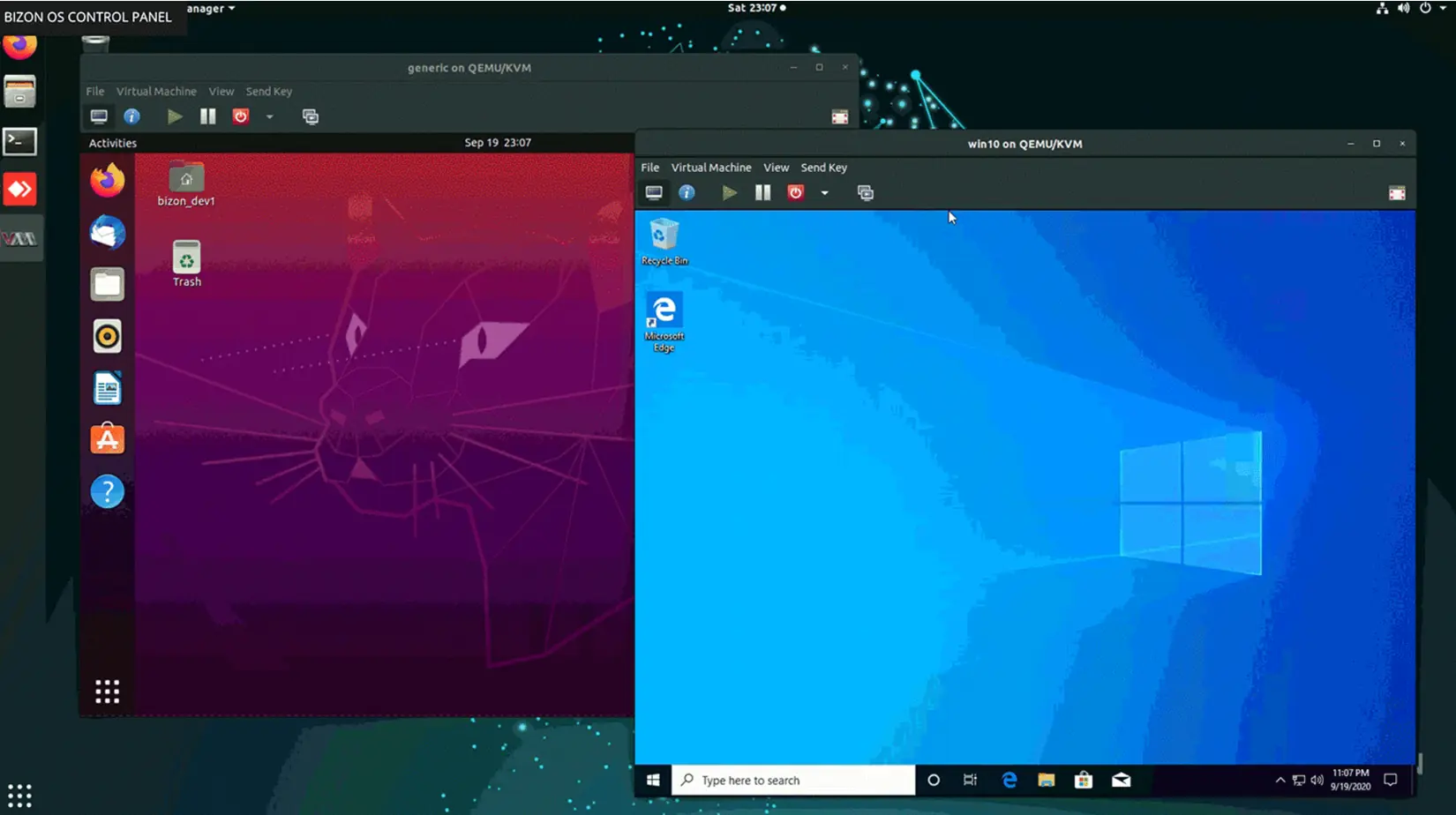

- ✓ Virtual Machines: Create VMs with KVM. Run Linux or Windows inside BizonOS

- ✓ Jupyter Notebooks: Launch GPU notebooks with per-container GPU assignment

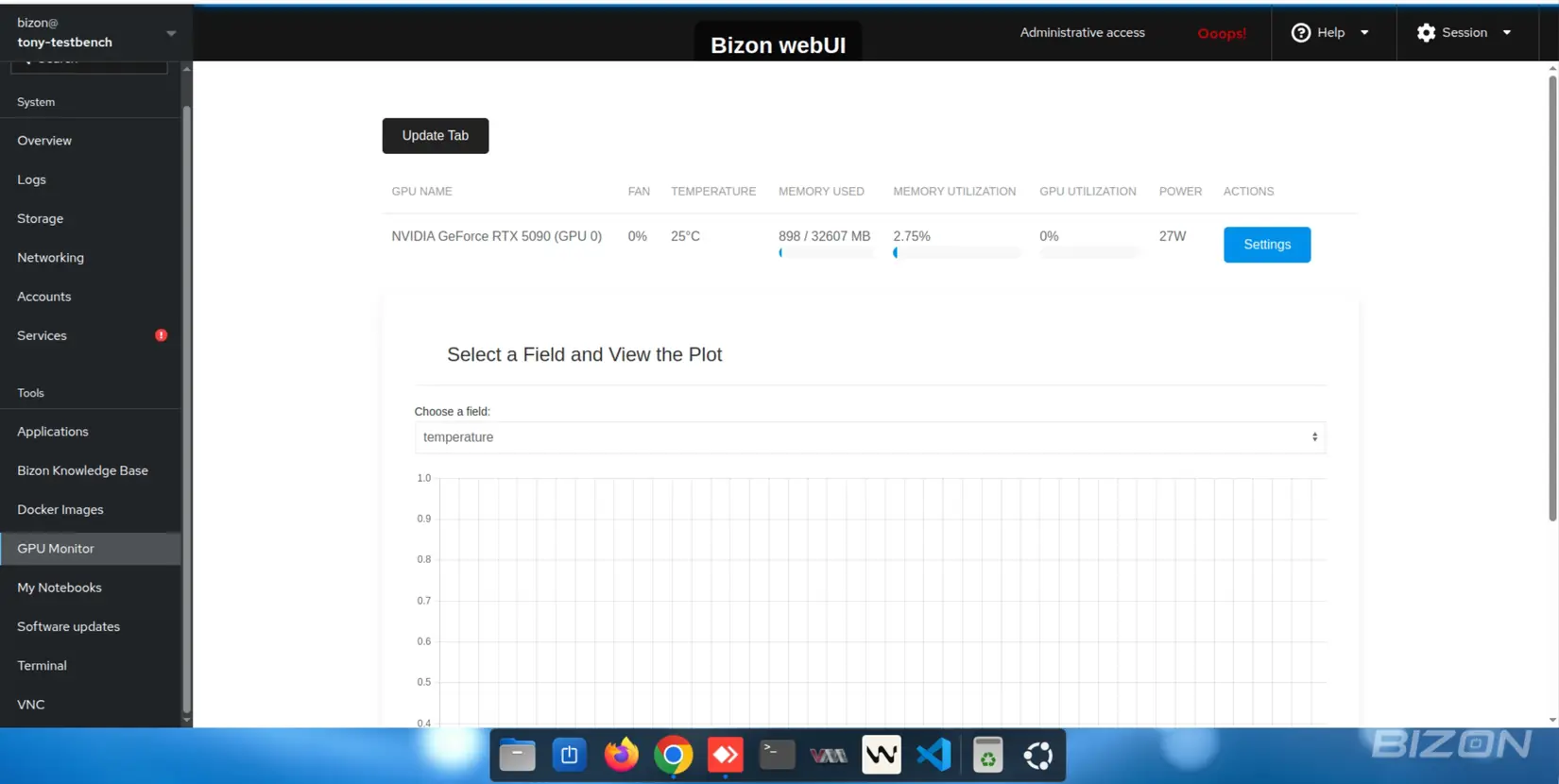

- ✓ GPU Monitoring: Real-time analytics via Grafana & Prometheus

- ✓ VNC Support: One-click GUI access, no monitor required

- ✓ System: Monitor GPU, CPU, RAM, HDD, and network in real-time

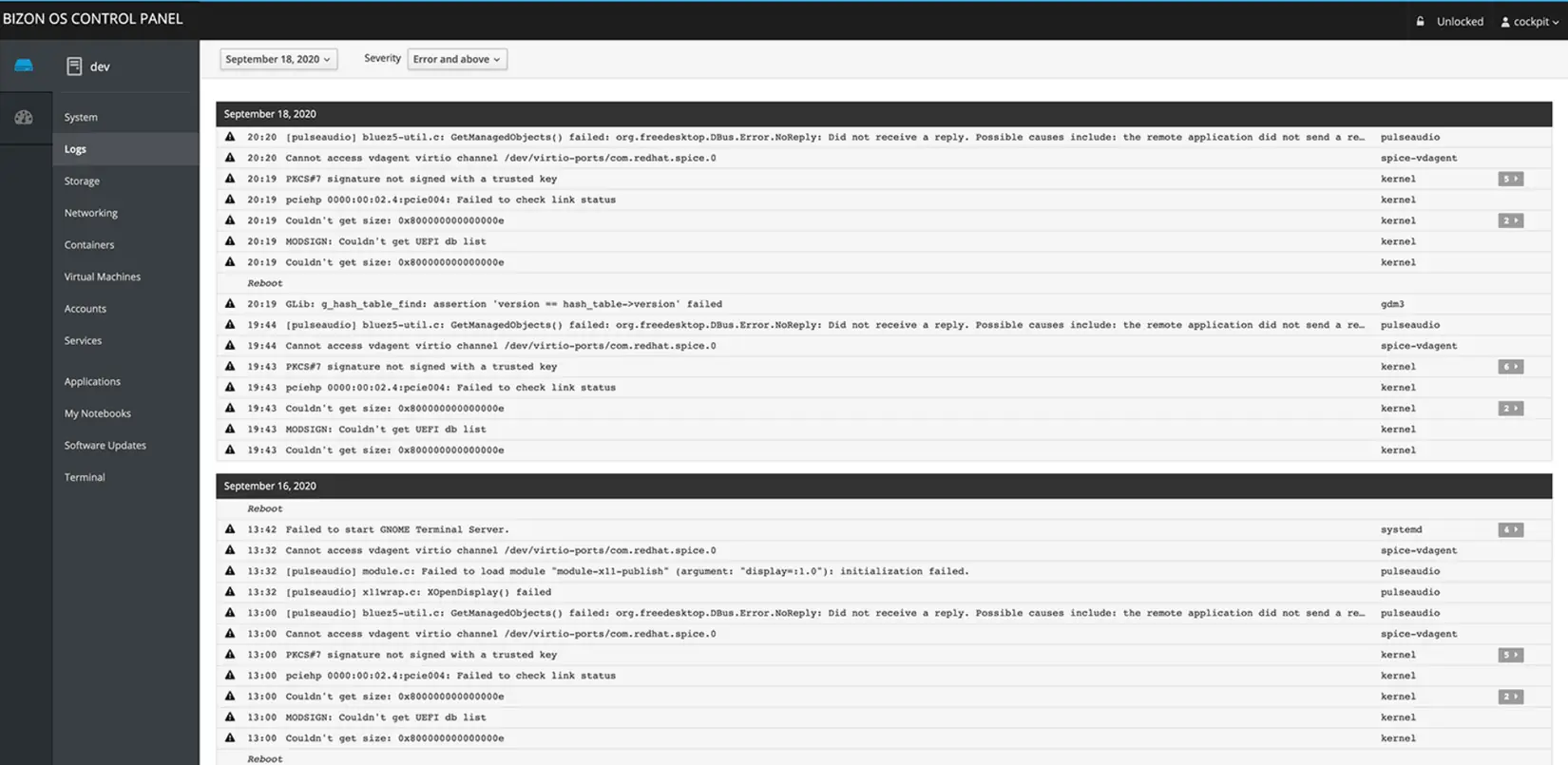

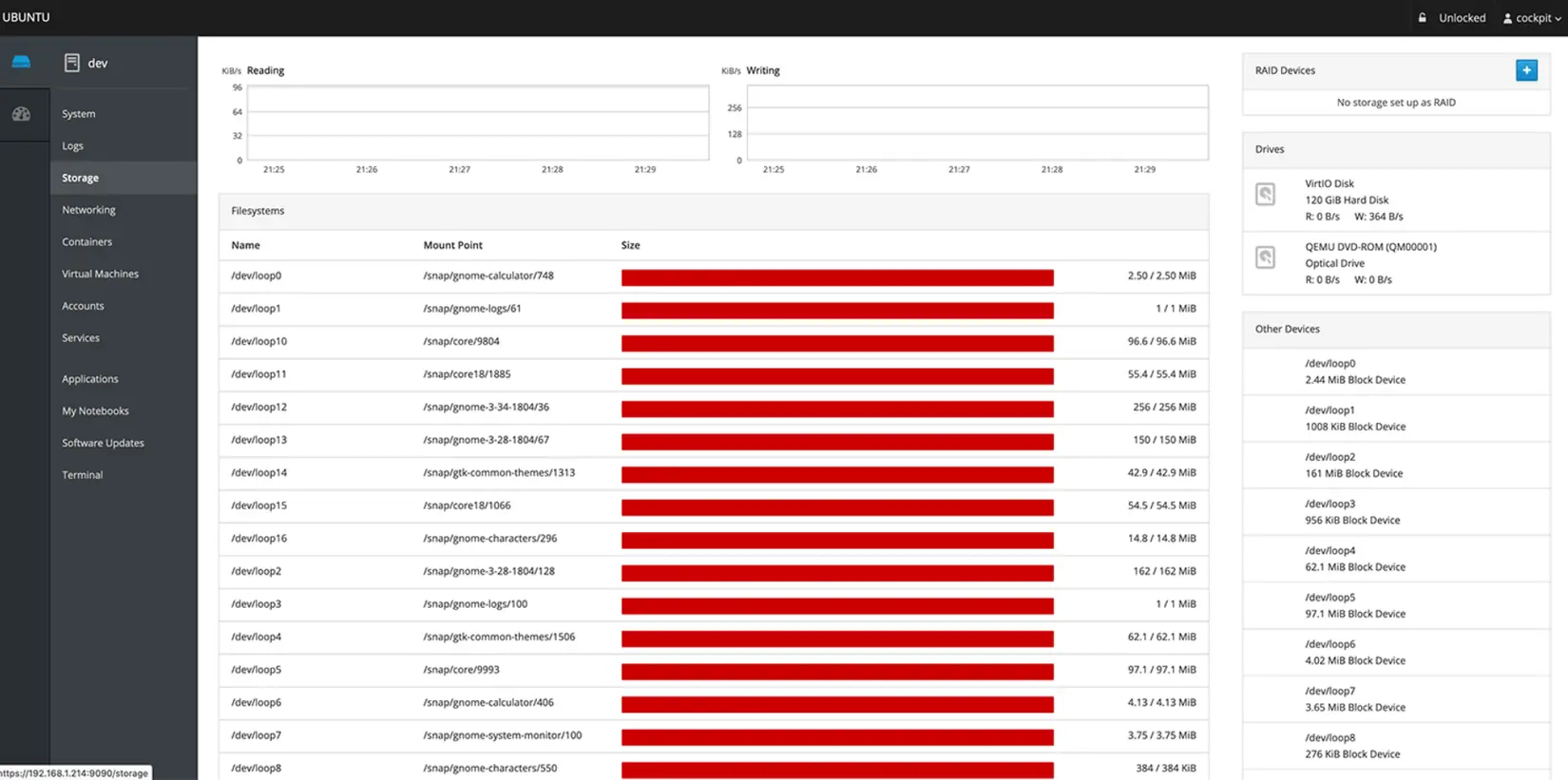

- ✓ Logs, Storage, Networking, Accounts & Services: Full system administration

- ✓ Knowledge Base & Video Guides: Tutorials for Docker, DL/ML, and more
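Per-container GPU assignment, as listed above for Containers and Jupyter Notebooks, generally comes down to Docker's `--gpus` flag, provided by the NVIDIA Container Toolkit. The Control Panel's internals are not documented here, so the following is only a minimal sketch of an equivalent `docker run` call; `my-jupyter-image:latest` is a hypothetical image name standing in for any image that ships JupyterLab.

```python
import shlex


def jupyter_gpu_container_cmd(image: str, gpu_ids: list[int], host_port: int = 8888) -> list[str]:
    """Build a `docker run` argv that pins a Jupyter container to specific GPUs.

    Assumes the NVIDIA Container Toolkit is installed (so `--gpus` works)
    and that `image` already contains JupyterLab.
    """
    if not gpu_ids:
        raise ValueError("at least one GPU id is required")
    # Docker parses the --gpus value as CSV, so a GPU list containing commas
    # must be wrapped in literal double quotes: "device=0,1"
    devices = '"device=' + ",".join(str(i) for i in gpu_ids) + '"'
    return [
        "docker", "run", "--rm", "-it",
        "--gpus", devices,
        "-p", f"{host_port}:8888",  # host port -> Jupyter's port inside the container
        image,
        "jupyter", "lab", "--ip=0.0.0.0", "--port=8888", "--no-browser", "--allow-root",
    ]


if __name__ == "__main__":
    # Hypothetical image; GPUs 0 and 1 only, served on host port 8890
    print(shlex.join(jupyter_gpu_container_cmd("my-jupyter-image:latest", [0, 1], host_port=8890)))
```

Passing the argv list directly to `subprocess.run` avoids shell quoting entirely; the printed `shlex.join` form is what you would type in a terminal.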

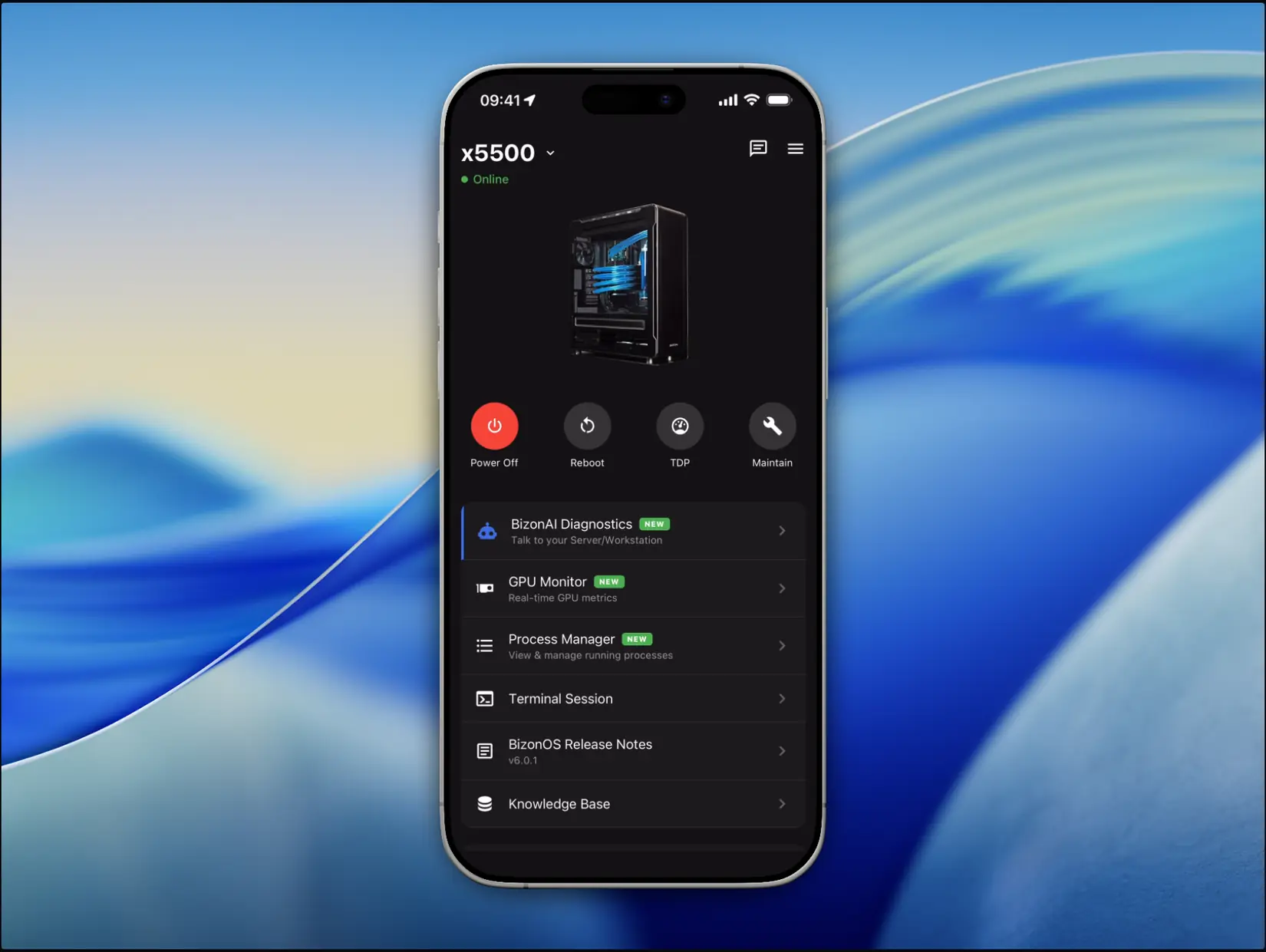

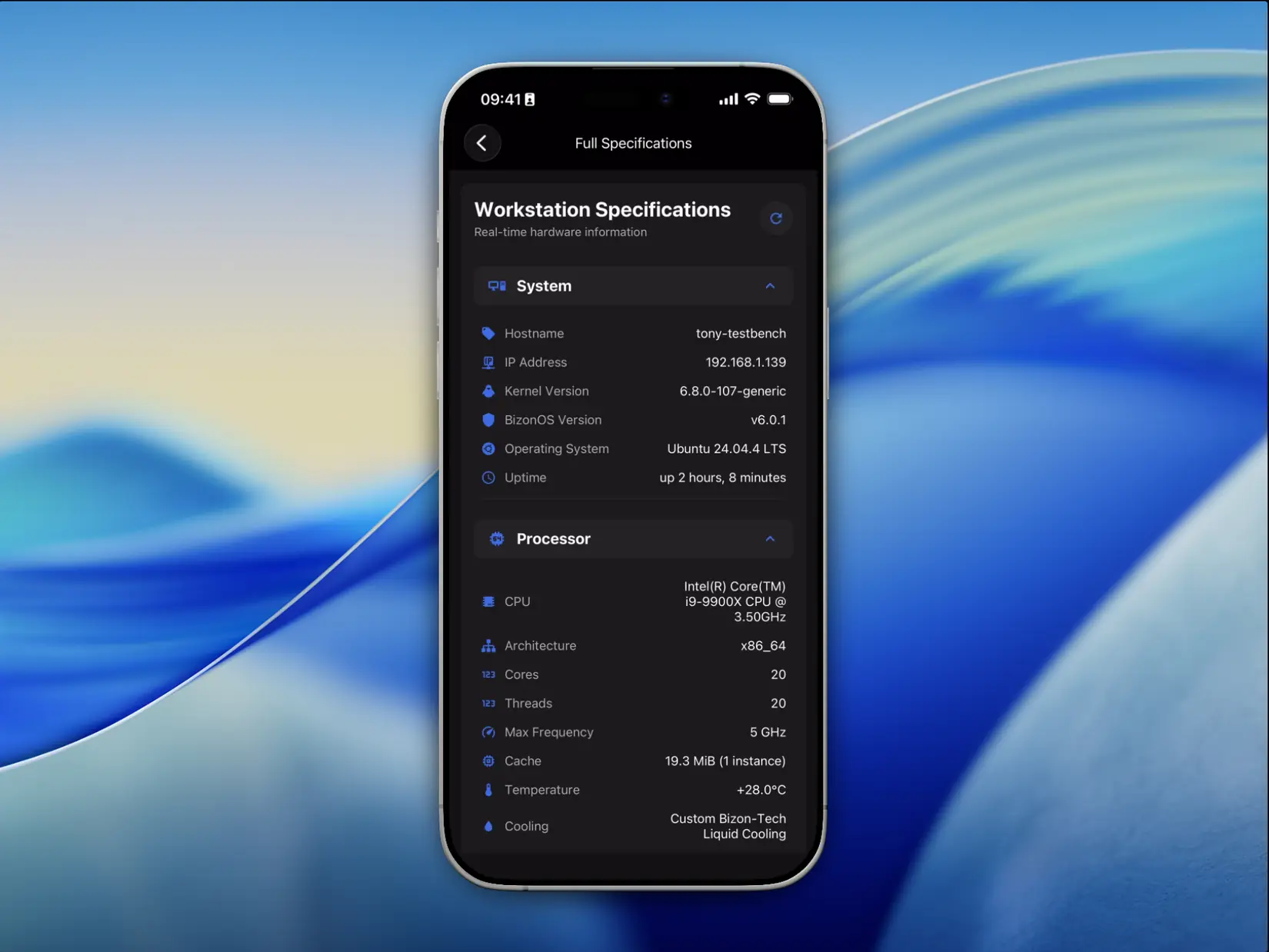

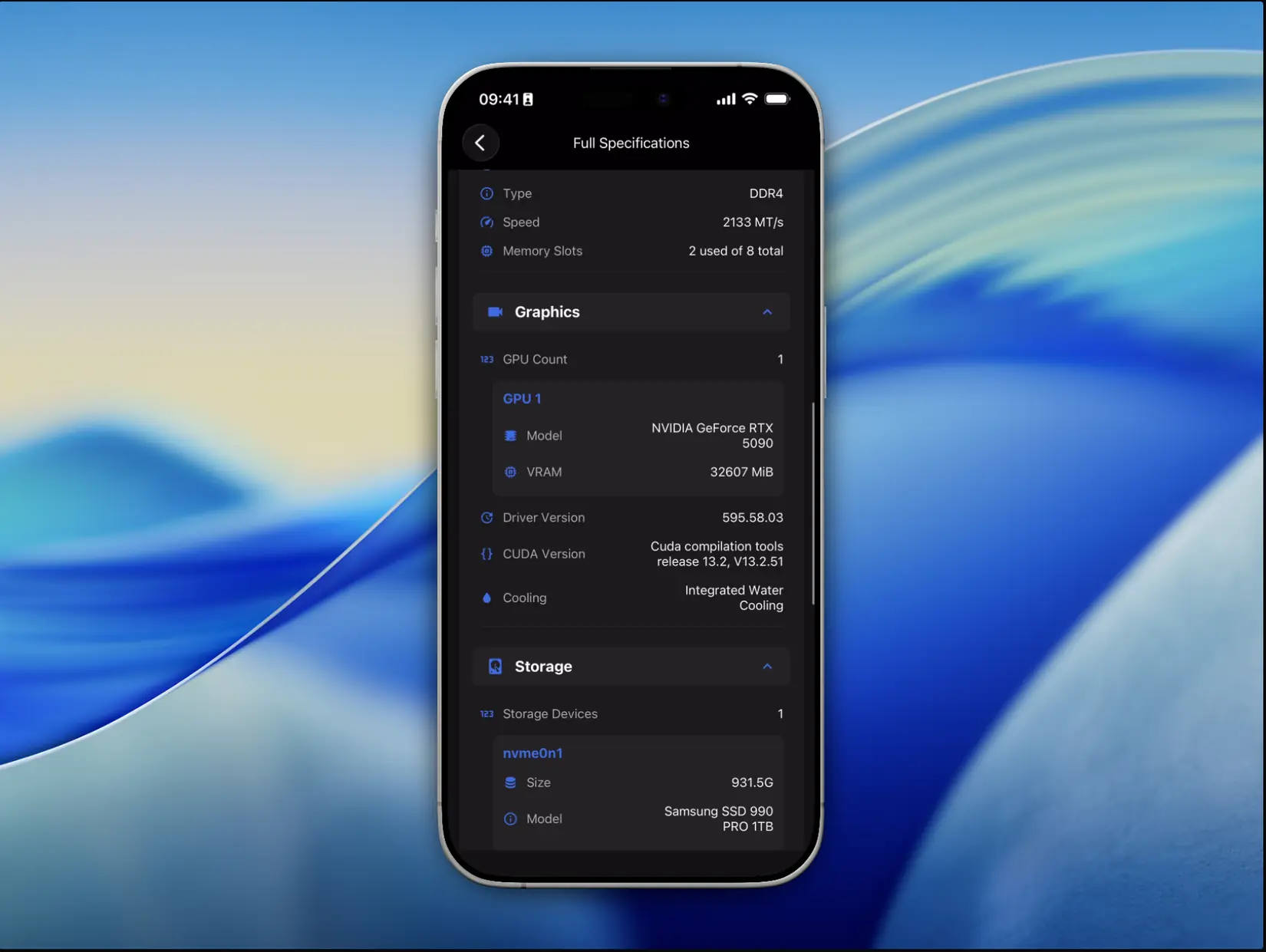

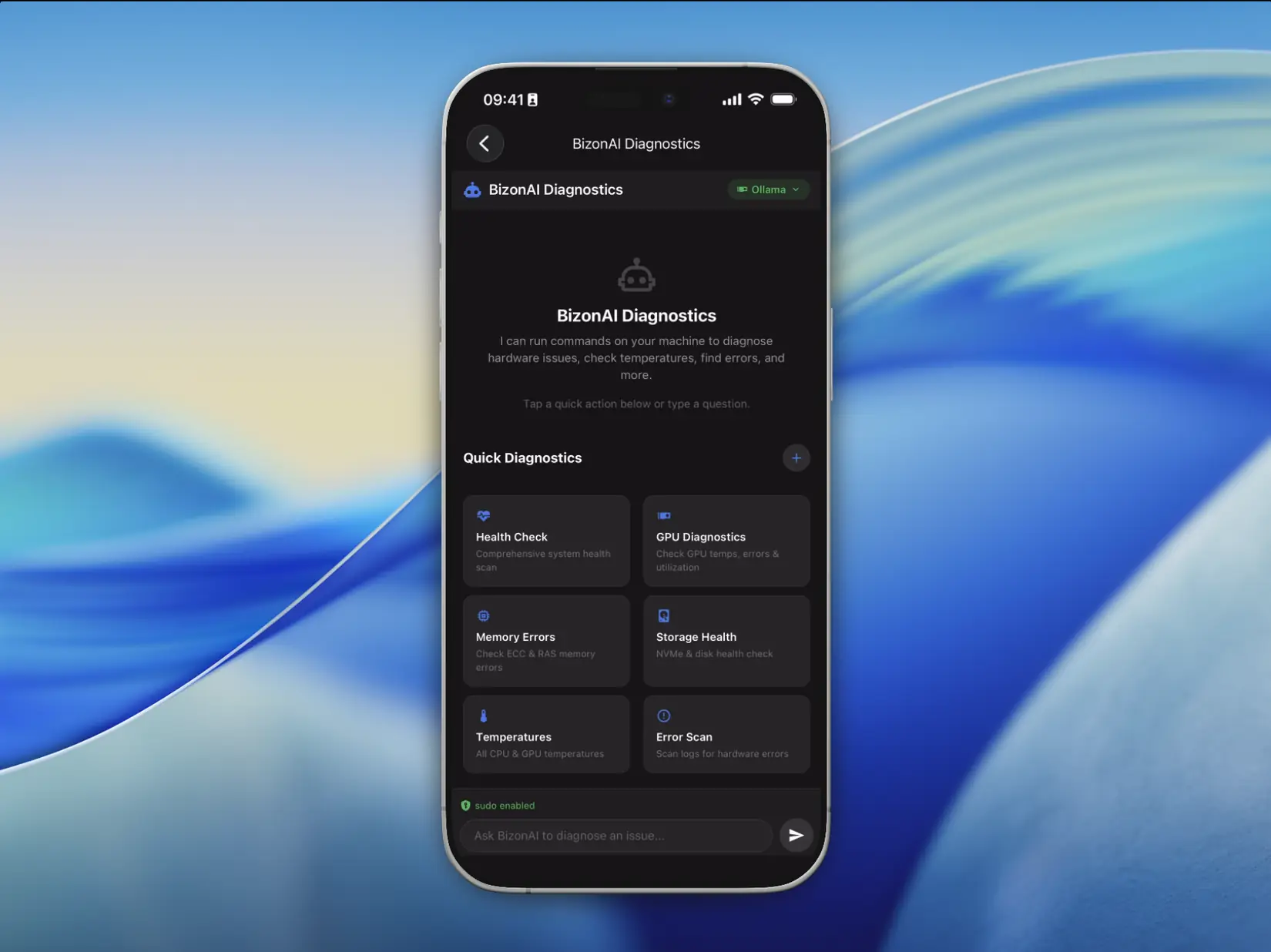

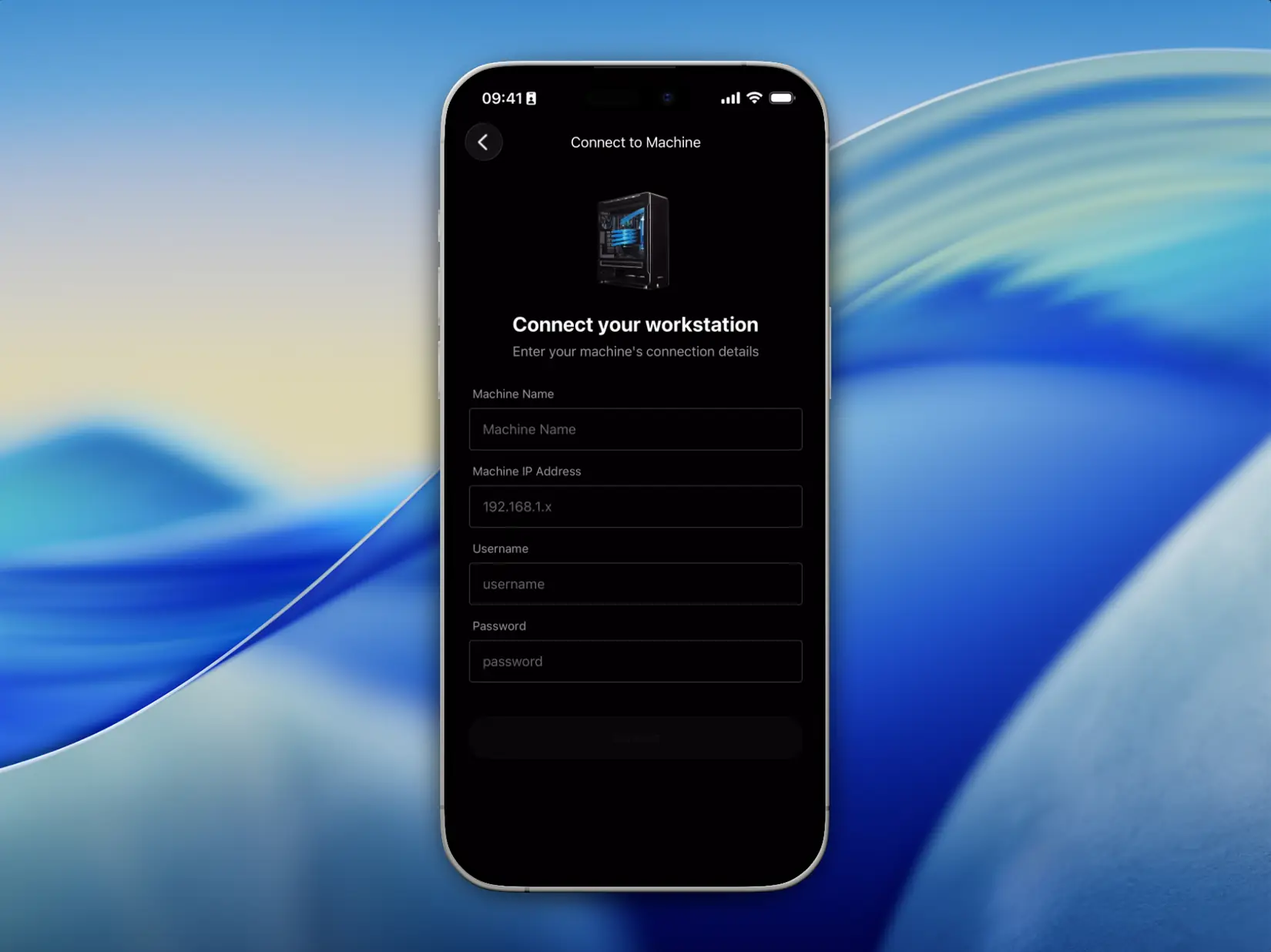

BIZON Remote Control

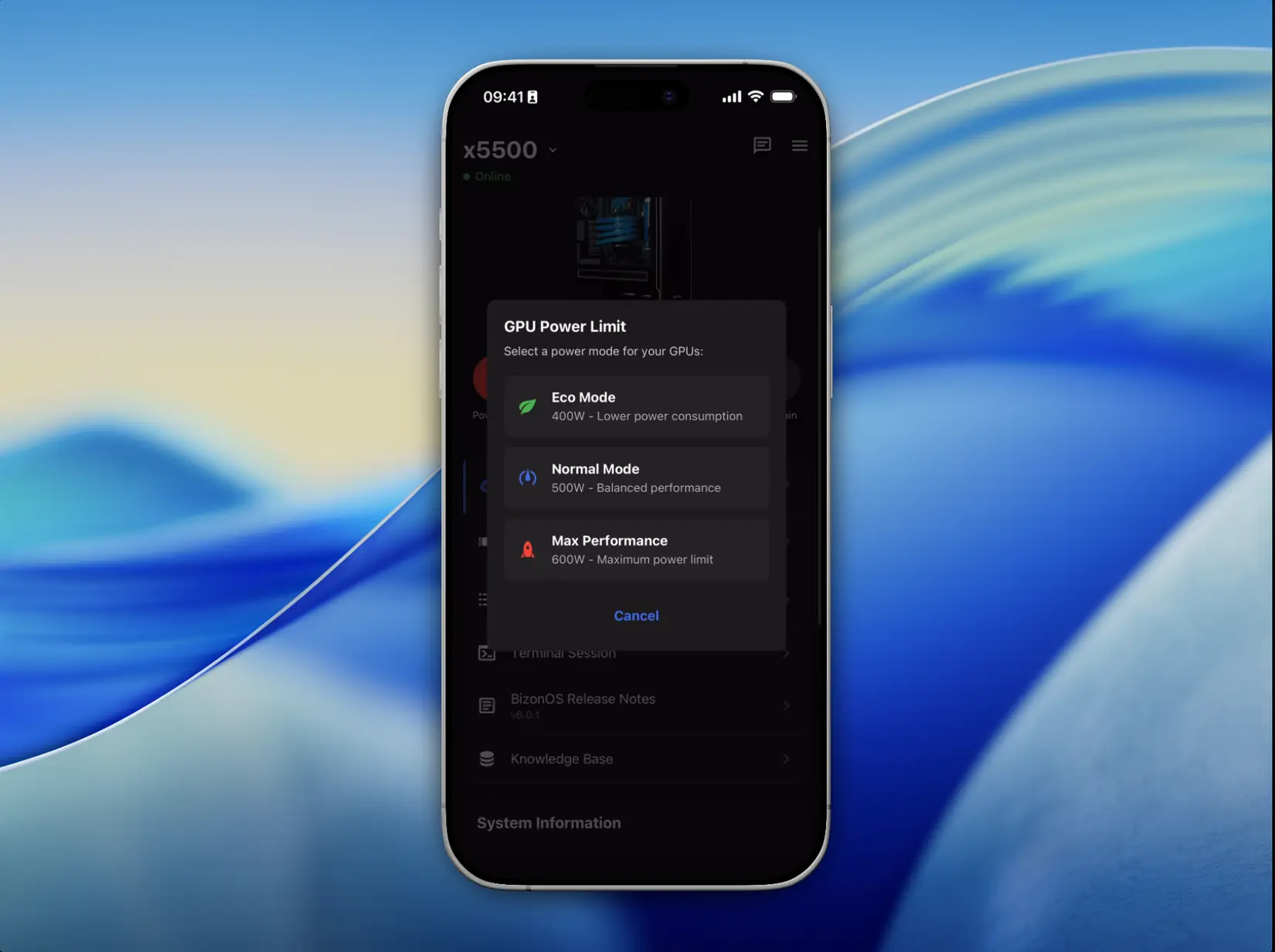

Control your AI workstation from your iPhone over your local network. The app communicates exclusively within your private network: nothing is exposed to the internet and there is no external access, so your hardware stays fully private.

- ✓ BizonAI Diagnostics: Talk to your server in natural language

- ✓ Choose AI backend: Claude, Ollama, or vLLM with model selection

- ✓ Custom Quick Actions: create reusable diagnostic prompts

- ✓ Real-time GPU monitoring with power, temperature, VRAM stats

- ✓ Full hardware specifications with live data

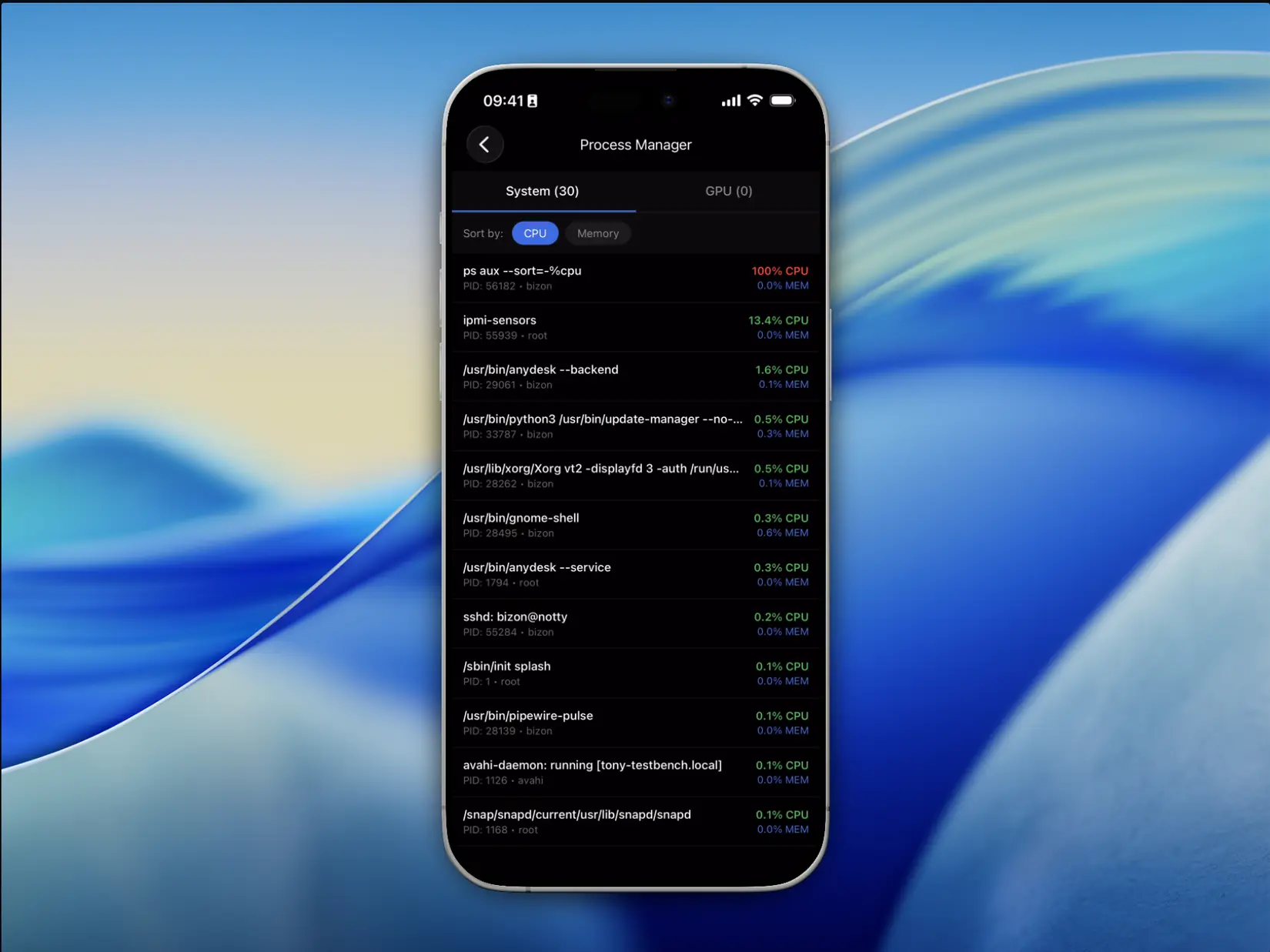

- ✓ Process manager: view and kill processes remotely

- ✓ Power controls: reboot, shutdown, GPU TDP management

- ✓ Multi-machine support: manage all BIZON systems
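The live GPU stats and TDP controls listed above correspond to standard `nvidia-smi` queries. How the app itself collects them is not documented here; as a minimal sketch, the following parses `nvidia-smi --query-gpu` CSV output and builds a power-limit command. The allowed range (`min_w`, `max_w`) is an assumption you would read from the `power.min_limit` and `power.max_limit` query fields for your card.

```python
import shutil
import subprocess
from dataclasses import dataclass

# Documented --query-gpu properties; "nounits" strips suffixes such as W and MiB
QUERY_FIELDS = "index,name,temperature.gpu,power.draw,power.limit,memory.used,memory.total"
QUERY_CMD = ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}", "--format=csv,noheader,nounits"]


@dataclass
class GpuStats:
    index: int
    name: str
    temp_c: float
    power_w: float
    power_limit_w: float
    mem_used_mib: float
    mem_total_mib: float

    @property
    def vram_pct(self) -> float:
        """VRAM usage as a percentage of total memory."""
        return 100.0 * self.mem_used_mib / self.mem_total_mib


def _num(field: str) -> float:
    # nvidia-smi prints "[N/A]" or "[Not Supported]" for sensors a GPU lacks
    field = field.strip()
    return float("nan") if field.startswith("[") else float(field)


def parse_gpu_csv(text: str) -> list[GpuStats]:
    """Parse one CSV row per GPU into GpuStats records."""
    stats = []
    for line in text.strip().splitlines():
        idx, name, temp, power, limit, used, total = (f.strip() for f in line.split(","))
        stats.append(GpuStats(int(idx), name, _num(temp), _num(power), _num(limit),
                              _num(used), _num(total)))
    return stats


def power_limit_cmd(gpu_index: int, watts: int, min_w: float, max_w: float) -> list[str]:
    """Build `nvidia-smi -i N -pl W`, which caps board power (requires root)."""
    if not min_w <= watts <= max_w:
        raise ValueError(f"{watts} W is outside the allowed range {min_w}-{max_w} W")
    return ["sudo", "nvidia-smi", "-i", str(gpu_index), "-pl", str(watts)]


if __name__ == "__main__":
    # Only query real hardware when nvidia-smi is on PATH
    if shutil.which("nvidia-smi"):
        out = subprocess.run(QUERY_CMD, capture_output=True, text=True, check=True).stdout
        for g in parse_gpu_csv(out):
            print(f"GPU{g.index} {g.name}: {g.temp_c:.0f} C, "
                  f"{g.power_w:.0f}/{g.power_limit_w:.0f} W, VRAM {g.vram_pct:.1f}%")
```

Validating the requested wattage before calling `nvidia-smi` keeps the error message readable; the driver itself also rejects limits outside the card's supported range.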

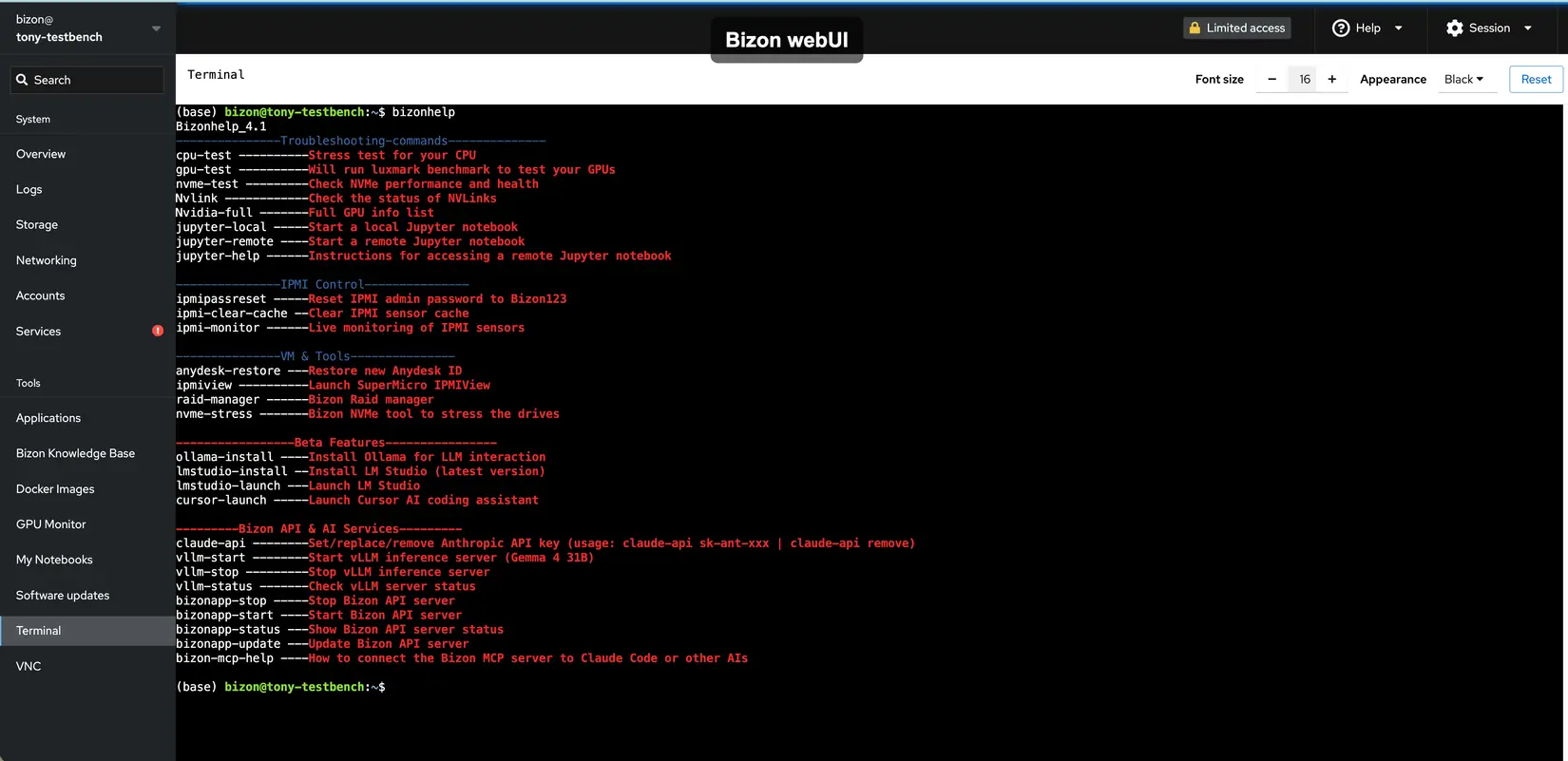

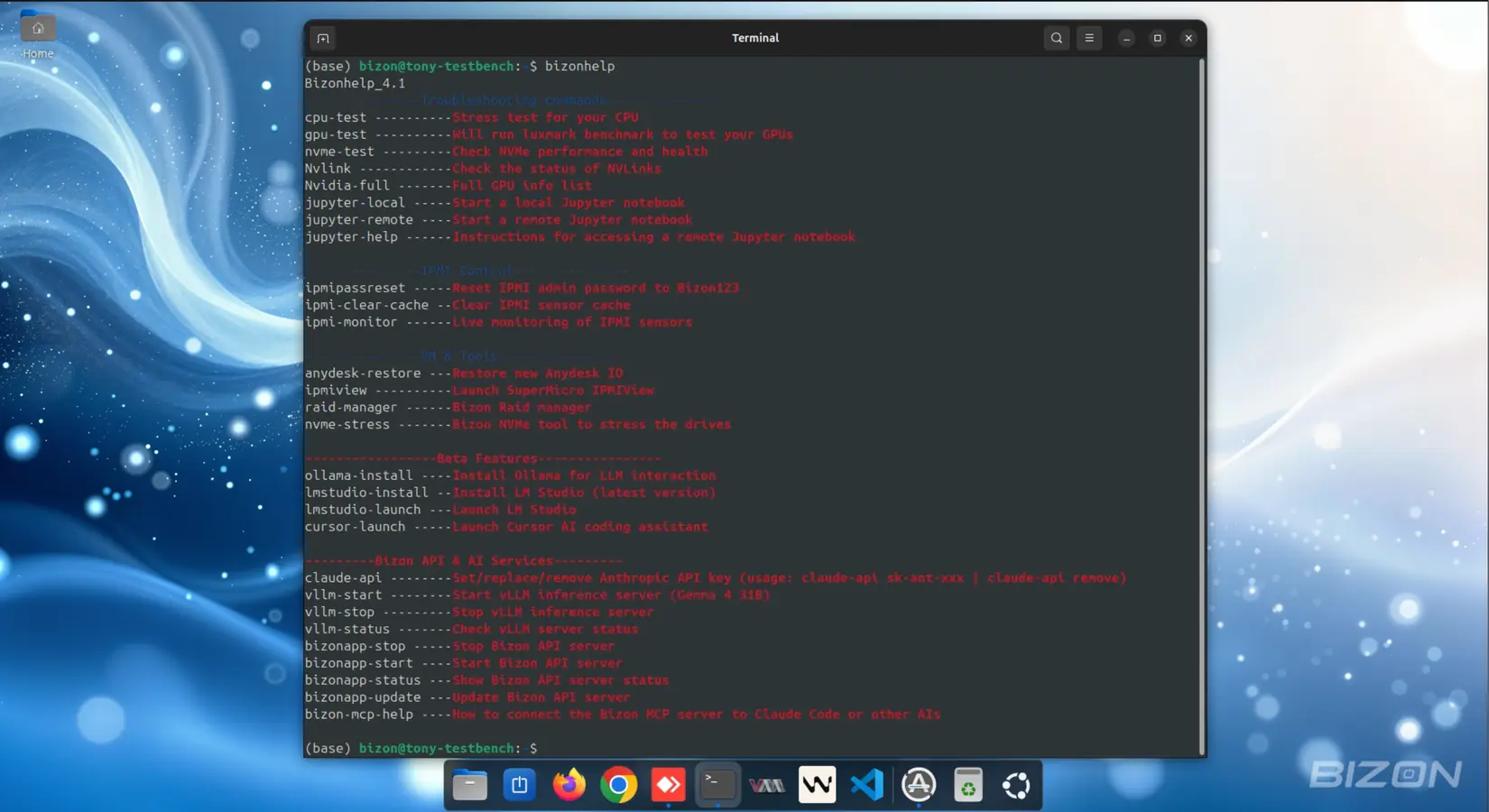

Bizonhelp & CLI Tools

Type `bizonhelp` in the terminal to access all tools. No documentation needed.

- ✓ Diagnostics: CPU stress test, GPU benchmark (LuxMark), NVMe health & performance

- ✓ IPMI Control: Live sensor monitoring, password reset, cache clear

- ✓ Jupyter: Launch local or remote notebooks with a single command

- ✓ RAID & NVMe: RAID array management and NVMe stress testing

- ✓ AI Tools (Beta): Install & launch Ollama, LM Studio, and Cursor AI

- ✓ Bizon API & vLLM: Start/stop/update the API server and vLLM inference

- ✓ Built-in Claude API key setup and MCP server connection guide
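How `bizonhelp` launches remote notebooks is not documented here. A common, generic way to reach a Jupyter server on a remote workstation is SSH local port forwarding; the sketch below only builds that `ssh` command, and the account and hostname in the usage line are hypothetical.

```python
import shlex
from typing import Optional


def jupyter_tunnel_cmd(user: str, host: str, remote_port: int = 8888,
                       local_port: Optional[int] = None) -> list[str]:
    """Build an ssh command that forwards a remote Jupyter port to this machine.

    -N                 open no remote shell, only forward the port
    -L L:localhost:R   connections to localhost:L here reach port R on the host
    """
    if local_port is None:
        local_port = remote_port
    return ["ssh", "-N", "-L", f"{local_port}:localhost:{remote_port}", f"{user}@{host}"]


if __name__ == "__main__":
    # Hypothetical account and hostname; afterwards browse to http://localhost:8888
    print(shlex.join(jupyter_tunnel_cmd("researcher", "workstation.lan")))
```

Binding Jupyter to `localhost` on the workstation and tunneling over SSH keeps the notebook port closed to the rest of the network.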

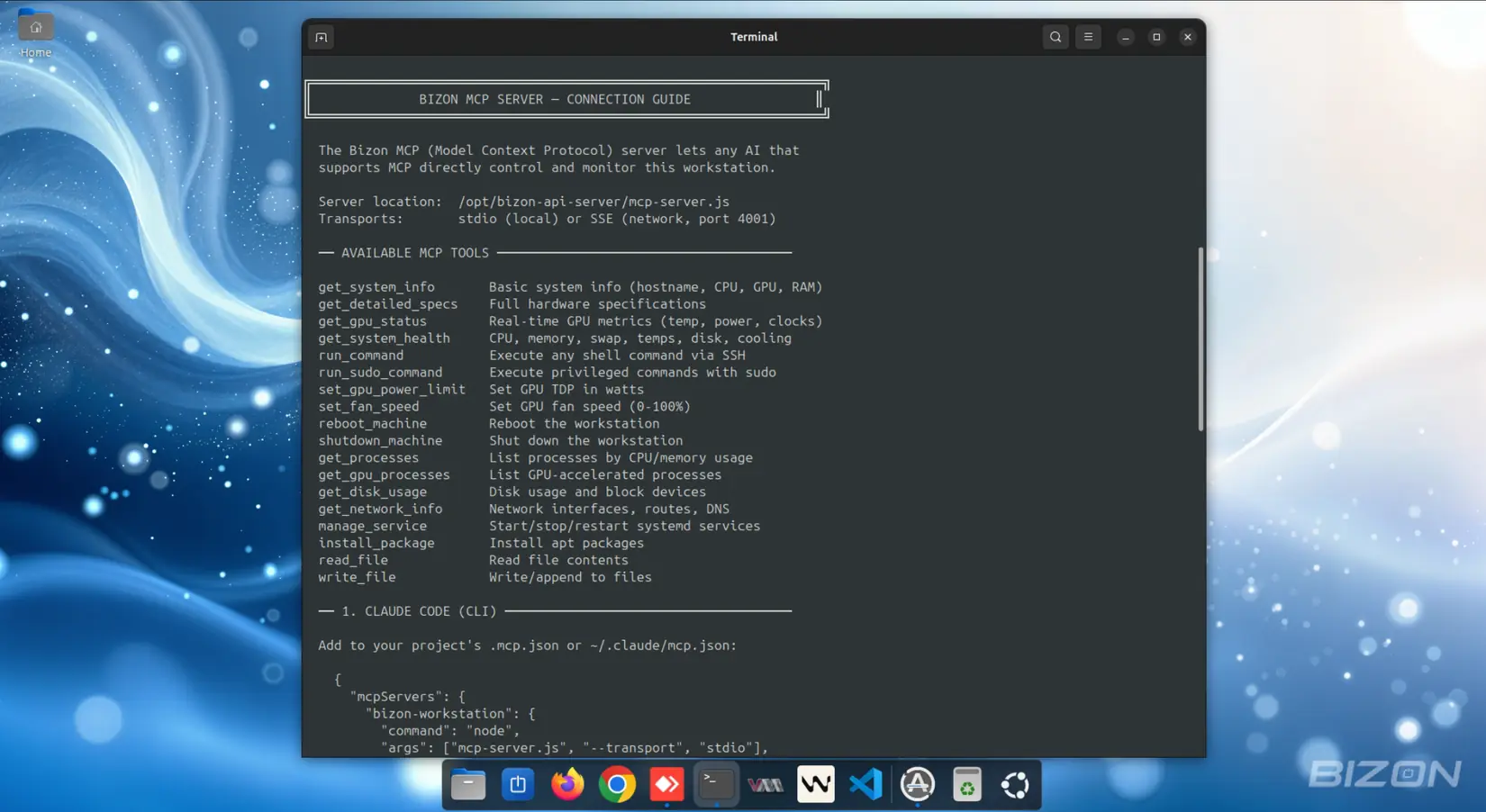

Bizon API & MCP Server

A RESTful API and Model Context Protocol server that runs entirely within your local network. No external exposure. AI coding assistants like Cursor, Windsurf, and Claude connect to your hardware privately and securely, without any internet access.

- ✓ RESTful API with SSH-based authentication

- ✓ MCP server for AI agent tool calling (Cursor, Windsurf, Claude)

- ✓ System info, GPU status, process management endpoints

- ✓ Remote command execution with sudo support

- ✓ Fan speed and GPU TDP control

- ✓ AI diagnostic chat with streaming responses

- ✓ File operations, service management, package installation
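Under the hood, MCP is JSON-RPC 2.0: a client initializes a session, discovers tools with `tools/list`, and invokes them with `tools/call`. The tools and protocol revision the Bizon MCP server exposes are not documented here, so this sketch assumes the `2024-11-05` MCP revision and uses `get_gpu_status` as a hypothetical tool name. It only builds the messages and does not implement a transport.

```python
import json
from typing import Any, Optional

PROTOCOL_VERSION = "2024-11-05"  # assumed MCP revision; the real server may negotiate another


def request(msg_id: int, method: str, params: Optional[dict] = None) -> dict:
    """A JSON-RPC 2.0 request; the server replies with a response carrying the same id."""
    msg: dict[str, Any] = {"jsonrpc": "2.0", "id": msg_id, "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def notification(method: str) -> dict:
    """A JSON-RPC 2.0 notification: no id, and no response is expected."""
    return {"jsonrpc": "2.0", "method": method}


def session_messages(tool: str, arguments: dict) -> list[dict]:
    """Messages a client sends to initialize, list tools, and call one tool."""
    return [
        request(1, "initialize", {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {},
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        }),
        notification("notifications/initialized"),
        request(2, "tools/list"),
        request(3, "tools/call", {"name": tool, "arguments": arguments}),
    ]


if __name__ == "__main__":
    # Over the stdio transport, each message is sent as one line of JSON
    for msg in session_messages("get_gpu_status", {"gpu_index": 0}):
        print(json.dumps(msg))
```

In a real session the client waits for the `initialize` response before sending `notifications/initialized`, and takes tool names and argument schemas from the `tools/list` result rather than hard-coding them.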

Full AI/ML Software Stack

Every tool, framework, and driver version is carefully tested on BIZON hardware, so there are no compatibility issues.

Molecular Dynamics Software Stack

A full molecular dynamics (MD), cryo-EM, and structural biology stack, pre-installed and tested on BIZON hardware, with NVIDIA GPU acceleration where supported.

Looking for a specific package? Our engineers can pre-install it. Contact sales@bizon-tech.com.

Optimized for the Latest NVIDIA GPUs

BizonOS adds day-one support for next-generation accelerators.

NVIDIA RTX PRO 6000 Blackwell

Professional Blackwell workstation GPU for large model training and AI at scale.

NVIDIA RTX 5090

Blackwell-generation GeForce flagship GPU for deep learning and local LLM inference.

NVIDIA H200, B200, B300

Hopper (H200) and Blackwell (B200, B300) data center GPUs for massively parallel AI.

AMD MI350X

AMD Instinct accelerator for HPC and AI workloads.

BizonOS v6.0 Changelog

Released April 15, 2026. Based on Ubuntu 24.04.1 LTS.

What's New

- Linux kernel 6.8.0-107 with support for Intel Xeon, AMD EPYC 9000, and AMD Threadripper PRO 9000 processors

- NVIDIA drivers 595.58.03, CUDA-X AI 13.2

- PyTorch 2.11.0+cu130

- TensorFlow 2.21.0

- vLLM pre-installed for local LLM inference

- Driver support for NVIDIA B200, RTX PRO 6000, and GB300, plus AMD MI350X

- BizonAI support bot (desktop + mobile)

- Redesigned Bizon Desktop App

- BIZON Remote Control iOS app v2.1.0

- API & MCP server for agentic AI

- IPMI tools built into the terminal

- Docker Desktop for Linux now included

Bug Fixes

- Fixed API service issues that could hang workstations

- Fixed CUDA initialization at kernel level for RTX PRO 6000 and H200 GPUs

- Fixed PCIe Gen5 issues on new motherboards (kernel patch)

Common Questions About BizonOS

Can I run local LLMs on a BIZON workstation with BizonOS?

Yes. BizonOS ships with vLLM pre-installed and ready for production local LLM inference. You can run models like Llama, Mistral, DeepSeek, Gemma 4, Nemotron 3, Kimi 2.5, GLM-5.1, MiniMax-M2.7, Qwen 3.5, Qwen 3.6, and other open-source LLMs locally on your NVIDIA GPUs with zero cloud dependency. Ollama is also supported for easy local model management. Your data never leaves your network.
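Both vLLM and Ollama can serve an OpenAI-compatible chat completions endpoint, so one small client covers either. This sketch assumes the stock ports (8000 for `vllm serve`, 11434 for Ollama) on the same machine and no authentication; BizonOS's preconfigured ports may differ, and the model name must match a model you have actually served or pulled.

```python
import json
import urllib.request

# Assumed default local endpoints (stock ports, no auth proxy in front):
#   vLLM:   `vllm serve <model>` -> http://localhost:8000/v1
#   Ollama: `ollama serve`       -> http://localhost:11434/v1 (OpenAI-compatible)
VLLM_BASE = "http://localhost:8000/v1"
OLLAMA_BASE = "http://localhost:11434/v1"


def chat_request(base_url: str, model: str, prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build a POST to the OpenAI-style /chat/completions route."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(base_url: str, model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(chat_request(base_url, model, prompt), timeout=timeout) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

For example, `ask(VLLM_BASE, "<served-model-name>", "Explain CUDA streams in one sentence.")` returns the reply text from vLLM; for Ollama, pass `OLLAMA_BASE` and a model tag you have pulled. Only the standard library is used, so the script runs outside any framework environment.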

What AI and deep learning frameworks come pre-installed?

BizonOS v6.0 includes PyTorch 2.11.0 (CUDA 13.0), TensorFlow 2.21.0, vLLM for LLM serving, Anaconda Data Science Package, Jupyter Notebook, JupyterLab, and GPU-optimized Docker containers for all major frameworks. Everything is tested on your specific hardware configuration.
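A quick way to confirm the pre-installed stack is the one you expect is to read PyTorch's local version tag. The `+cu130` suffix names the CUDA release the wheel was built against (13.0). It does not have to equal the installed CUDA 13.2, because newer NVIDIA drivers run binaries built for earlier CUDA releases. The helper below is a minimal sketch, and `report()` degrades gracefully if PyTorch is not importable.

```python
import re
from typing import Optional


def cuda_from_torch_version(version: str) -> Optional[str]:
    """Map a PyTorch local version such as '2.11.0+cu130' to its CUDA release '13.0'.

    Returns None for CPU-only ('+cpu') or untagged builds.
    """
    match = re.search(r"\+cu(\d{2,})$", version)
    if match is None:
        return None
    digits = match.group(1)
    # The final digit is the minor version: cu130 -> 13.0, cu128 -> 12.8, cu118 -> 11.8
    return f"{int(digits[:-1])}.{digits[-1]}"


def report() -> None:
    """Print the PyTorch build, its CUDA tag, and the GPUs it can see."""
    try:
        import torch
    except ImportError:
        print("PyTorch is not importable in this environment")
        return
    print("torch", torch.__version__, "built for CUDA", cuda_from_torch_version(torch.__version__))
    print("torch.version.cuda:", torch.version.cuda)
    print("CUDA available:", torch.cuda.is_available())
    for i in range(torch.cuda.device_count()):
        print(f"  GPU {i}: {torch.cuda.get_device_name(i)}")


if __name__ == "__main__":
    report()
```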

Which NVIDIA GPUs are supported?

BizonOS v6.0 supports all current NVIDIA GPUs including the RTX 5090, RTX PRO 6000, B200, GB300, H200, H100, and A100, plus driver-level support for AMD MI350X accelerators. NVIDIA driver 595.58.03 and CUDA 13.2 are pre-installed and optimized.

What is the Bizon API and MCP Server?

The Bizon API is a RESTful server that runs exclusively within your local network. Nothing is exposed to the internet. The MCP (Model Context Protocol) server allows AI coding assistants like Cursor, Windsurf, and Claude to interact with your hardware securely over your private network, running commands, checking GPU status, and managing services.

Do I need to install anything after receiving my BIZON workstation?

No. BizonOS comes fully pre-configured with all frameworks, drivers, tools, and apps. Just plug in, power on, and start developing. Every component is tested on your exact hardware configuration before shipping.

Why should I use BizonOS instead of installing my own Linux flavor?

You absolutely can install your own Linux. But here's what you'd be giving up:

Zero setup time. Getting NVIDIA drivers, CUDA, cuDNN, PyTorch, TensorFlow, Docker with GPU runtime, Jupyter, and vLLM all working together correctly on a fresh Linux install can take days. And that's if everything goes smoothly. BizonOS ships with all of it pre-installed, tested, and working on your exact GPU configuration from day one.

Hardware-optimized from the factory. Every BizonOS image is built and validated specifically for BIZON workstations. Driver versions, CUDA toolkit, kernel parameters, and GPU firmware are all tuned for your hardware, rather than a generic configuration that may or may not work with your GPU count, NVLink setup, or NVMe array.

Built-in tooling you'd otherwise build yourself. BizonOS includes the Bizon Control Panel (web-based system management, containers, VMs, GPU monitoring, Jupyter launcher), the Bizon Desktop App (AI diagnostic assistant with local models), Bizonhelp CLI (stress tests, IPMI tools, RAID manager, AI service management), the Bizon API & MCP Server (connect Cursor, Windsurf, or Claude to your hardware), and the BIZON Remote Control iOS app. That's an entire software ecosystem you don't have to build or maintain.

Updates that don't break things. BizonOS updates are tested against your specific hardware before they reach you. With a DIY Linux setup, a driver update or kernel upgrade can silently break GPU access, CUDA compatibility, or the Docker GPU runtime, and diagnosing the problem can take hours.

Local AI inference, ready to go. vLLM and Ollama are pre-configured so you can run Llama, Mistral, Gemma, DeepSeek, and other models immediately. No manual installation, no CUDA version mismatches, no dependency conflicts.

In short: BizonOS lets you spend your time on research and development, not system administration.